a large enterprise size a Kubernetes cluster for real-time inference on their customer-facing LLM product. We started with 64 H100 SXM GPUs across 8 nodes, all running vLLM in monolithic mode. The results were not where we needed them to be. During prefill bursts the tensor cores hit 92% utilization. Ten milliseconds later, during decode, the same GPUs cratered to 28%. We were paying for 64 H100s and getting meaningful work out of maybe 20 of them for 90% of each request’s lifetime. The finance team wanted to know why the GPU bill looked like we were training a foundation model when we were just serving one.

A Llama 70B model running inference on an H100 GPU hits 92% compute utilization during prefill. Thirty milliseconds later, during decode, that same GPU drops to 30%. The hardware hasn’t changed. The model weights are identical. The arithmetic intensity of the workload fell by 5x between one phase and the next.

That mismatch sits underneath every inference cost problem, and most serving architectures pretend it doesn’t exist.

Every time a large language model processes a request, it does two different things. First, it reads your entire prompt in parallel, filling a key-value cache with the attention state of every input token. That’s the prefill phase. Then it generates output tokens one at a time, reading from that cache on each step. That’s decode. Prefill is a matrix multiplication problem. Decode is a memory bandwidth problem. They have opposite hardware requirements, and the standard practice is to run both on the same pool of GPUs.

That standard practice is expensive. The GPU that’s perfectly sized for your prefill burst is massively overprovisioned for decode. The GPU that’s cost-efficient for decode can’t keep up with prefill. You pay for the worst case in both directions.

Disaggregated inference splits these two phases onto separate hardware pools, each sized for what it actually does. The idea surfaced in a 2024 OSDI paper called DistServe, out of UC San Diego’s Hao AI Lab. Eighteen months later, Perplexity runs it in production. Meta, LinkedIn, and Mistral serve traffic through it. NVIDIA built an entire framework (Dynamo) around it. vLLM, SGLang, and TensorRT-LLM all support it natively.

Here’s how it works, where the trade-offs are, and when you shouldn’t bother.

The two phases of inference are not the same workload

If you’ve run inference on any transformer model, you’ve already encountered both phases. But most monitoring dashboards flatten them into a single “inference” metric, which hides the problem.

Prefill processes all input tokens simultaneously. For a 4,096-token prompt, the attention computation involves large matrix multiplications across the full sequence length. This is compute-bound work. The GPU’s tensor cores are the bottleneck. On an H100 SXM, prefill achieves 200-400 arithmetic operations per byte of memory accessed. Utilization sits between 90% and 95%. The memory bandwidth, at 3.35 TB/s, is barely taxed.

Decode generates one token at a time. Each step reads the entire KV-cache from HBM to compute a single attention output. The tensor cores finish in microseconds and then wait for the next memory read. Arithmetic intensity drops to 60-80 ops/byte. GPU utilization falls to 20-40%. The tensor cores sit idle while the memory bus saturates.

These aren’t rough estimates. The InfoQ technical analysis from September 2025 measured prefill at 90-95% utilization and decode at 20-40%, with 3-4x better energy efficiency per operation during prefill. The SPAD paper from UT Austin simulated an H100 and found that reducing memory bandwidth by 40% only increased prefill latency by 17%, because prefill doesn’t use the bandwidth. Reducing compute capacity by 50% only increased decode latency by 22%, because decode doesn’t use the compute.

Two workloads. Opposite bottlenecks. Same GPU.
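A roofline estimate makes the split concrete. The sketch below takes the 3.35 TB/s bandwidth figure from above and assumes a dense BF16 peak of about 989 TFLOPS for the H100 SXM (that peak is my assumption, not a number from the measurements cited here). It is an upper bound on attainable compute, not a utilization measurement.

```python
# Roofline sketch: attainable compute as a function of arithmetic intensity.
PEAK_TFLOPS = 989.0   # assumption: H100 SXM dense BF16 peak
MEM_BW_TBPS = 3.35    # from the text: H100 SXM HBM bandwidth in TB/s

def attainable_tflops(ops_per_byte: float) -> float:
    """Upper bound on throughput: capped by compute or by memory traffic."""
    return min(PEAK_TFLOPS, ops_per_byte * MEM_BW_TBPS)

def bound(ops_per_byte: float) -> str:
    ridge = PEAK_TFLOPS / MEM_BW_TBPS  # intensity where the two limits meet
    return "compute-bound" if ops_per_byte >= ridge else "memory-bound"

for label, intensity in [("decode", 70), ("prefill", 400)]:
    frac = attainable_tflops(intensity) / PEAK_TFLOPS
    print(f"{label:8s} {intensity:4d} ops/byte -> {bound(intensity)}, "
          f"at most {frac:.0%} of peak compute")
```

Under these assumptions the ridge point sits near 295 ops/byte, so decode at 60-80 ops/byte lands well inside memory-bound territory, while where prefill lands depends on which end of its 200-400 range a given workload hits.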

[Source: Author (SVG created using Inkscape)]

What monolithic serving actually costs you

In a standard vLLM or TensorRT-LLM deployment, a single GPU pool handles both phases. The scheduler interleaves prefill and decode requests within the same batch.

The immediate problem is interference. When a new prefill request enters the batch, active decode requests have to wait. Prefill is compute-heavy and takes longer per step. Those decode requests, which should be returning tokens at a steady cadence, experience a latency spike. This is the inter-token latency (ITL) jitter that production systems obsess over. A user watching a streaming response sees the text pause mid-sentence while the GPU processes someone else’s prompt.

The slower-burning problem is utilization waste. The GPU is provisioned to handle prefill peaks, so it’s overprovisioned for the decode phase that dominates the request lifecycle. A typical generation produces 200-500 output tokens. Each decode step takes 10-30ms. A 300-token response spends roughly 3-9 seconds in decode and maybe 200ms in prefill. The GPU runs decode for 90%+ of wall-clock time, and during that 90%, it’s using 30% of its compute capacity. You’re paying for an H100 and getting H100-level utilization for one-tenth of the request duration.
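The arithmetic in that paragraph is easy to rerun for your own traffic. This is a minimal sketch using the figures quoted above (300 output tokens, 10-30ms per decode step, 200ms of prefill, 92% and 30% utilization); the function name and the time-weighted averaging are my own simplification.

```python
def phase_breakdown(output_tokens, step_ms, prefill_ms,
                    prefill_util=0.92, decode_util=0.30):
    """Return (share of wall time in decode, time-weighted compute utilization)."""
    decode_ms = output_tokens * step_ms
    total_ms = prefill_ms + decode_ms
    decode_share = decode_ms / total_ms
    avg_util = (prefill_ms * prefill_util + decode_ms * decode_util) / total_ms
    return decode_share, avg_util

for step_ms in (10, 30):
    share, util = phase_breakdown(output_tokens=300, step_ms=step_ms, prefill_ms=200)
    print(f"{step_ms} ms/step: decode is {share:.0%} of the request, "
          f"average compute utilization {util:.0%}")
```

Even at the fast end of the decode range, the time-weighted utilization stays close to the decode figure, because prefill is such a small slice of the request.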

[Source: Author (SVG created using Inkscape)]

The vLLM community addressed part of this with chunked prefill, which breaks a long prefill into smaller pieces and interleaves them with decode steps. This smooths out the worst ITL spikes, but it doesn’t solve the utilization mismatch. The GPU is still doing both jobs.
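To see why chunking helps jitter but not utilization, here is a toy cost model. It is not vLLM’s scheduler: the per-step overhead and per-token cost are hypothetical constants, and the only point is the shape of the result.

```python
OVERHEAD_MS = 10.0    # hypothetical fixed cost per scheduler step
PER_TOKEN_MS = 0.05   # hypothetical marginal cost per token in the batch

def step_times(prompt_tokens, decode_requests, chunk_size=None):
    """Per-step latencies seen by active decode requests while one prompt is prefilled."""
    chunk = chunk_size or prompt_tokens   # no chunking: whole prompt in one step
    times = []
    remaining = prompt_tokens
    while remaining > 0:
        prefill_now = min(chunk, remaining)
        # every active decode request adds one token to the step
        times.append(OVERHEAD_MS + PER_TOKEN_MS * (prefill_now + decode_requests))
        remaining -= prefill_now
    return times

mono = step_times(4096, decode_requests=32)
chunked = step_times(4096, decode_requests=32, chunk_size=512)
print(f"monolithic: worst step {max(mono):.1f} ms, total {sum(mono):.1f} ms")
print(f"chunked:    worst step {max(chunked):.1f} ms, total {sum(chunked):.1f} ms")
```

In this toy model the worst inter-token gap shrinks from one long stall to several short ones, but the total time the GPU spends on the prompt does not go down. The same device is still alternating between two workloads.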

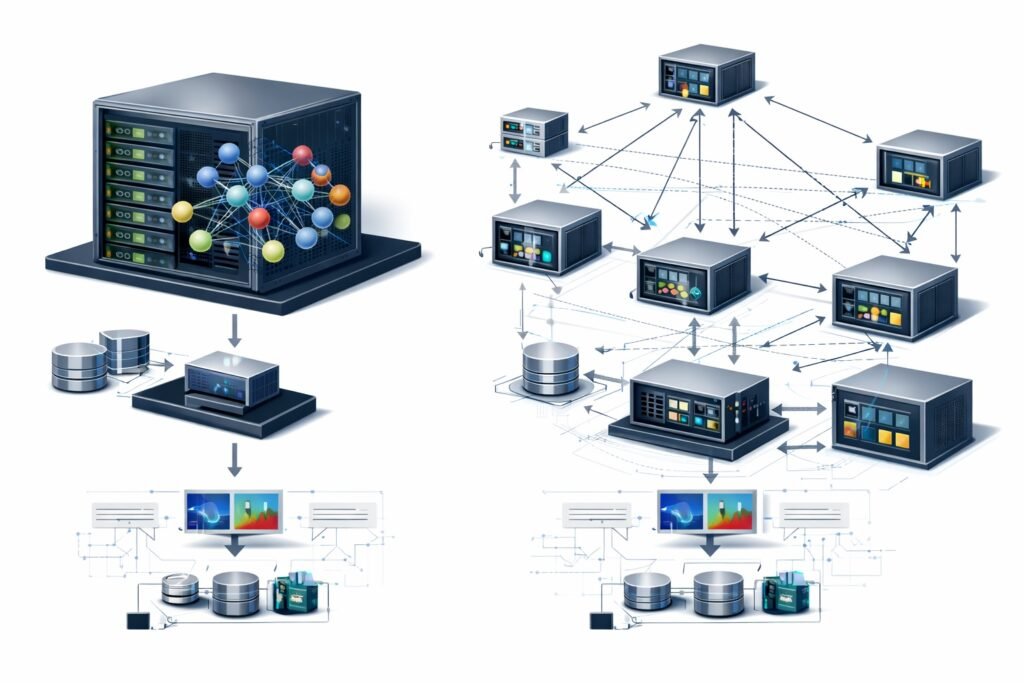

Splitting the inference path in two

Disaggregated inference runs prefill and decode on separate GPU pools connected by a fast network. A request arrives and is routed to a prefill worker, which processes the full prompt and generates the KV-cache. That cache is then transferred over the network to a decode worker, which handles the autoregressive token generation.

Three pieces make it work.

1. A KV-aware router sits in front of both pools. It assigns incoming requests to available prefill workers and, once prefill completes, routes the KV-cache to a decode worker with available capacity. The router tracks which decode workers hold which KV-caches, enabling features like prefix caching across requests that share common prompt prefixes (system prompts, few-shot examples, tool descriptions).

2. A prefill pool contains GPUs optimized for high-throughput matrix multiplication. These workers process prompts, build KV-caches, and hand them off. They don’t generate output tokens. Because prefill is compute-bound, these GPUs can use less HBM bandwidth without penalty.

3. A decode pool contains GPUs optimized for memory bandwidth. These workers receive KV-caches and generate tokens autoregressively. Because decode is memory-bound, these GPUs can use less compute without penalty. With large batch sizes (hundreds of concurrent decode requests), decode utilization rises because the memory reads are amortized across more work.

[Source: Author (SVG created using Inkscape)]
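The router’s placement decision can be sketched in a few lines. This is an illustration of the idea, not how Dynamo or llm-d implement it: `DecodeWorker`, `shared_prefix_len`, and `pick_decode_worker` are hypothetical names, and real routers typically match on hashed token blocks rather than raw token lists.

```python
from dataclasses import dataclass, field

@dataclass
class DecodeWorker:
    name: str
    active_requests: int = 0
    cached_prefixes: list = field(default_factory=list)  # token-id tuples held in KV-cache

def shared_prefix_len(a, b):
    """Number of leading tokens two sequences have in common."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n

def pick_decode_worker(workers, prompt_tokens):
    """Prefer the worker with the longest cached prefix; break ties by lowest load."""
    def score(w):
        best = max((shared_prefix_len(p, prompt_tokens) for p in w.cached_prefixes),
                   default=0)
        return (best, -w.active_requests)
    return max(workers, key=score)

workers = [
    DecodeWorker("decode-a", active_requests=5, cached_prefixes=[(1, 2, 3, 4)]),
    DecodeWorker("decode-b", active_requests=1),
]
print(pick_decode_worker(workers, (1, 2, 3, 9)).name)  # shares a 3-token prefix with decode-a
print(pick_decode_worker(workers, (7, 8)).name)        # no cache hit anywhere, so load decides
```

Scoring cache affinity ahead of load is a design choice. A production router would also cap how much load a popular prefix can attract to one worker.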

The two pools scale independently. If your workload is prompt-heavy (long system prompts, agentic workflows with tool descriptions), you add prefill workers. If you’re serving many concurrent users generating long responses, you add decode workers. This decoupling is the primary economic benefit: you stop paying for compute capacity during decode, and you stop paying for memory bandwidth during prefill.
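Independent scaling turns capacity planning into two separate division problems. A rough sketch, assuming you have measured per-worker token throughput for each phase; the throughput figures and the 70% headroom target below are placeholders, not benchmarks.

```python
import math

def workers_needed(requests_per_s, tokens_per_request,
                   tokens_per_s_per_worker, headroom=0.7):
    """Workers required to serve the token demand at a target utilization."""
    demand = requests_per_s * tokens_per_request
    return math.ceil(demand / (tokens_per_s_per_worker * headroom))

PREFILL_TOK_S = 50_000  # hypothetical measured prefill throughput per worker
DECODE_TOK_S = 5_000    # hypothetical measured decode throughput per worker

for prompt_len in (2_000, 8_000):
    prefill = workers_needed(20, prompt_len, PREFILL_TOK_S)
    decode = workers_needed(20, 300, DECODE_TOK_S)
    print(f"prompt={prompt_len}: {prefill} prefill workers, {decode} decode workers")
```

Quadrupling the prompt length changes only the prefill pool size. In a monolithic deployment the same shift would force you to add full-capability replicas.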

The tax you pay: moving the KV-cache

Disaggregation is not free. The KV-cache produced during prefill has to move from the prefill GPU to the decode GPU over the network, and these caches are not small.

For a 70B parameter model using grouped-query attention with 80 layers, 8 KV heads per layer, 128 dimensions per head, stored in FP16: each token’s KV state is 327,680 bytes. A 4,096-token prompt produces 1.34 GB of KV-cache. That entire block has to transfer before the decode worker can begin generating.

Here’s the calculation you can run for any model:

```python
# KV-cache size calculation for any transformer model
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, seq_len, dtype_bytes=2):
    """Returns KV-cache size in bytes for a single request."""
    per_token = n_layers * n_kv_heads * head_dim * 2 * dtype_bytes  # 2 for K and V
    return per_token * seq_len

# Llama 70B with GQA
cache = kv_cache_bytes(n_layers=80, n_kv_heads=8, head_dim=128, seq_len=4096)
print(f"Per token: {80 * 8 * 128 * 2 * 2:,} bytes")  # 327,680 bytes
print(f"Full cache: {cache / 1e9:.2f} GB")  # 1.34 GB

# Llama 8B (smaller model, same exercise)
cache_8b = kv_cache_bytes(n_layers=32, n_kv_heads=8, head_dim=128, seq_len=4096)
print(f"Llama 8B cache: {cache_8b / 1e9:.2f} GB")  # 0.54 GB
```

[Source: Author (SVG created using Inkscape)]

At 100 Gbps (a standard RDMA link with EFA or ConnectX NICs), 1.34 GB takes roughly 107 milliseconds. At 400 Gbps, it takes 27ms. These numbers matter because they set the floor on time-to-first-token: the decode worker can’t start until the cache arrives.
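The transfer-time figures come straight from dividing bits by link rate. A minimal sketch, assuming the link runs at its nominal rate with no protocol overhead (real transfers will be somewhat slower):

```python
def transfer_ms(num_bytes, link_gbps):
    """Idealized wire time in milliseconds for a payload over a link of the given rate."""
    return num_bytes * 8 / (link_gbps * 1e9) * 1e3

cache_bytes = 327_680 * 4096  # per-token KV size times prompt length, from the text
for gbps in (100, 400):
    print(f"{gbps} Gbps: {transfer_ms(cache_bytes, gbps):.0f} ms")
```

Swap in your own per-token size and median prompt length to get the TTFT floor for your deployment.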

Perplexity’s engineering team built their disaggregated serving stack on top of libfabric and RDMA, using cuMem for cross-process GPU memory sharing. Their KV messenger coordinates transfers layer-by-layer, so the decode worker can begin processing early layers while later layers are still in transit. This pipelining reduces the effective transfer latency below the raw bandwidth calculation.
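The effect of layer-wise pipelining can be approximated with a two-stage pipeline model. This is not Perplexity’s code. It assumes every layer takes the same time to transfer and the same time to consume, and the per-layer consume time below is a hypothetical value.

```python
def sequential_ms(n_layers, per_layer_transfer_ms, per_layer_consume_ms):
    """Ship the whole cache first, then process it."""
    return n_layers * (per_layer_transfer_ms + per_layer_consume_ms)

def pipelined_ms(n_layers, per_layer_transfer_ms, per_layer_consume_ms):
    """Overlap the transfer of layer i+1 with the consumption of layer i."""
    slower = max(per_layer_transfer_ms, per_layer_consume_ms)
    # the first layer must arrive, the slower stage then sets the pace,
    # and the last layer still has to be consumed
    return per_layer_transfer_ms + (n_layers - 1) * slower + per_layer_consume_ms

per_layer_transfer = 107 / 80  # 100 Gbps figure from the text, spread over 80 layers
per_layer_consume = 0.5        # hypothetical
print(f"sequential: {sequential_ms(80, per_layer_transfer, per_layer_consume):.1f} ms")
print(f"pipelined:  {pipelined_ms(80, per_layer_transfer, per_layer_consume):.1f} ms")
```

In this model the pipelined total is governed by the slower of the two stages, so the other stage’s time is almost entirely hidden.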

The question is whether this transfer overhead is worth it. In a monolithic system, there’s no KV transfer, but there’s queuing delay. When a new prefill burst lands, active decode requests stall. Measurements from the DistServe paper show P99 latency improvements of 2x or more with disaggregation, because eliminating the prefill-decode interference removes the tail latency spikes that queuing causes. The 27ms transfer cost replaces 200-500ms of queuing delay.

There is a crossover point, though. For short prompts with high prefix cache hit rates, local prefill on the decode worker can be faster than transferring the cache over the network. The BentoML inference handbook reports 20-30% performance degradation when disaggregation is applied to workloads that don’t actually need it. This isn’t an architecture you apply blindly.
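A back-of-the-envelope version of that crossover compares recomputing the uncached part of the prompt locally against shipping the full cache over the network. This sketch deliberately ignores the remote prefill’s own compute time and the interference that local prefill causes for other decode requests, and the 20,000 tokens/second local prefill rate is a hypothetical figure.

```python
def compare_paths(prompt_tokens, cached_tokens, bytes_per_token,
                  link_gbps, local_prefill_tok_per_s):
    """Return (local_ms, transfer_ms) for a single request."""
    uncached = prompt_tokens - cached_tokens
    local_ms = uncached / local_prefill_tok_per_s * 1e3
    transfer_ms = prompt_tokens * bytes_per_token * 8 / (link_gbps * 1e9) * 1e3
    return local_ms, transfer_ms

cases = {
    "1,000 tokens, 90% cached": (1000, 900),
    "4,096 tokens, cold": (4096, 0),
}
for label, (prompt, cached) in cases.items():
    local, xfer = compare_paths(prompt, cached, 327_680, 100, 20_000)
    winner = "local prefill" if local < xfer else "remote prefill + transfer"
    print(f"{label}: local {local:.1f} ms vs transfer {xfer:.1f} ms -> {winner}")
```

With these inputs, the warm request is cheaper to finish locally and the cold long prompt is cheaper to ship.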

What the production stack looks like today

Eighteen months after DistServe, disaggregated inference has gone from a research prototype to the default serving architecture at multiple companies running LLMs at scale.

[Source: Author (SVG created using Inkscape)]

NVIDIA’s Dynamo, announced at GTC 2025, is a datacenter-scale orchestration layer that treats prefill and decode workers as first-class entities. It supports vLLM, SGLang, and TensorRT-LLM as inference backends, and includes a KV-aware router that handles cache placement and request scheduling across pools.

On the Kubernetes-native side, llm-d (an open-source project from Red Hat and IBM Research) implements disaggregated serving as a set of Kubernetes custom resources. Prefill and decode pools are separate deployments that autoscale independently based on queue depth and GPU utilization. KV-cache routing is handled through a gateway that tracks cache locations. For teams already running inference on EKS, GKE, or OpenShift, this maps cleanly onto existing cluster management workflows.

If you’re using vLLM directly, a minimal disaggregated setup looks like this:

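```bash
# Start the prefill instance
python -m vllm.entrypoints.openai.api_server \
  --model meta-llama/Llama-3.1-70B-Instruct \
  --tensor-parallel-size 4 \
  --kv-transfer-config '{
    "kv_connector": "PyNcclConnector",
    "kv_role": "kv_producer",
    "kv_rank": 0,
    "kv_parallel_size": 2
  }'

# Start the decode instance (separate node or GPU set)
python -m vllm.entrypoints.openai.api_server \
  --model meta-llama/Llama-3.1-70B-Instruct \
  --tensor-parallel-size 4 \
  --kv-transfer-config '{
    "kv_connector": "PyNcclConnector",
    "kv_role": "kv_consumer",
    "kv_rank": 1,
    "kv_parallel_size": 2
  }'
```

The kv_connector specifies the transfer protocol (NCCL in this case, RDMA via MooncakeConnector for higher throughput). The kv_role tells each instance whether it produces or consumes KV-caches, and kv_rank gives each instance its index within the transfer group (vLLM’s PyNcclConnector examples use 0 for the producer and 1 for the consumer). The connector handles the cache handoff between the two instances; something in front of them, such as the small proxy in vLLM’s example scripts, still has to send each request to the prefill instance first and then to the decode instance. Connector names and config fields have changed across vLLM releases, so check the documentation for the version you run.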
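For a two-instance setup like this, vLLM’s example scripts put a small proxy in front: it sends the request to the prefill instance with max_tokens forced to 1, then forwards the original request to the decode instance. Below is a stripped-down sketch of that pattern using only the standard library. The ports and URLs are placeholders I chose, there is no streaming or error handling, and it assumes both instances expose the OpenAI-compatible /v1/completions endpoint.

```python
import json
import urllib.request

PREFILL_URL = "http://localhost:8100/v1/completions"  # hypothetical address
DECODE_URL = "http://localhost:8200/v1/completions"   # hypothetical address

def split_request(payload: dict) -> tuple[dict, dict]:
    """Build the prefill-side and decode-side payloads for one client request."""
    # the prefill instance only needs to build the KV-cache, so ask for one token
    prefill = {**payload, "max_tokens": 1, "stream": False}
    decode = dict(payload)  # the original request, served by the decode instance
    return prefill, decode

def post_json(url: str, payload: dict) -> dict:
    req = urllib.request.Request(
        url,
        data=json.dumps(payload).encode(),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)

def complete(payload: dict) -> dict:
    prefill, decode = split_request(payload)
    post_json(PREFILL_URL, prefill)        # response discarded; the KV-cache is the point
    return post_json(DECODE_URL, decode)   # decode instance picks up the transferred cache
```

Calling `complete({"model": "meta-llama/Llama-3.1-70B-Instruct", "prompt": "Hello", "max_tokens": 128})` would exercise both instances in order, provided they are running at those addresses.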

SGLang tested DeepSeek-R1 with disaggregated serving on 96 H100 GPUs: 3 nodes (24 GPUs) for prefill, 9 nodes (72 GPUs) for decode. They measured 52,300 input tokens/second and 22,300 output tokens/second per node. A follow-up on GB200 NVL72 showed 3.8x prefill and 4.8x decode throughput gains compared to the same workload on H100.

vLLM’s implementation (llm-d 0.3, October 2025) reached 2,200 tokens/second per H200 GPU with 32-way expert parallelism. Perplexity’s production system uses RDMA-based KV transfer with layer-pipelined transmission, supporting speculative decoding and structured output generation across the prefill-decode boundary.

When disaggregation makes things worse

Not every workload benefits. As noted earlier, the BentoML inference handbook reports 20-30% performance degradation when disaggregation is applied to workloads that don’t need it.

Short prompts hurt the most. If your median prompt is under 512 tokens and generation length stays below 100 tokens, the KV transfer overhead eats into whatever latency savings you’d get from phase separation. Local prefill on the decode worker takes a few milliseconds for a short prompt. Shipping the cache across RDMA takes longer than just computing it again. For chatbots handling quick Q&A, monolithic serving is simpler and faster.

Prefix cache reuse changes the equation too. Multi-turn conversations and agentic workflows tend to share large system prompts across requests. If 80%+ of the KV-cache already lives on the decode worker from a previous turn, the incremental prefill is tiny. Sending a fresh cache from a separate prefill worker wastes network bandwidth on data that’s already local.

Scale matters. A single node with 2-4 GPUs rarely serves enough concurrent requests to keep two separate pools busy. The scheduling overhead alone can tank throughput at that size. Disaggregation starts paying off when you have dozens of GPUs and enough traffic to keep both pools saturated.

And then there’s the network. Without RDMA or at least 100 Gbps links, KV transfer becomes the new bottleneck. TCP works but adds latency. Perplexity built their entire stack on RDMA with libfabric for a reason.

The Hao AI Lab put it bluntly in their November 2025 retrospective: “disaggregated prefill does not improve throughput.” The gains are latency control and cost efficiency through better utilization. If you’re meeting latency targets with monolithic serving, adding a KV transfer hop between phases just gives you more moving parts to debug at 2 AM.

The cost arithmetic

The economic case for disaggregation rests on one observation: you can use cheaper hardware for each phase when they’re separated.

Prefill workers need high FLOPS but don’t need massive HBM capacity. An H100 SXM with 80GB HBM is well-suited. An H200 with 141GB HBM is overkill for prefill; you’re paying for memory capacity and bandwidth you won’t use.

Decode workers need high memory bandwidth and large HBM to hold KV-caches for many concurrent requests, but don’t need peak FLOPS. An H200, with its larger HBM3e capacity and higher bandwidth, is actually better suited for decode than an H100. The extra memory lets you batch more decode requests together, amortizing the memory reads and pushing utilization higher.

The SPAD paper takes this logic to its extreme: they propose “right-sizing” GPU designs into separate prefill and decode chips. Their simulation shows that a prefill chip with 40% less memory bandwidth than an H100 sees prefill latency rise by only 17%. A decode chip with 50% less compute sees decode latency rise by only 22%. The cost savings from removing unused silicon in each chip are substantial.

At the cluster level, the InfoQ analysis reports 15-40% total infrastructure cost reduction from disaggregation. The savings come from not overprovisioning hardware, from eliminating idle tensor cores during decode, and from being able to add decode workers without buying prefill capacity you don’t need.
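To check where your own deployment might land in that range, compare hourly pool costs directly. Every number in this sketch is a placeholder: the GPU counts and per-hour prices are invented for illustration and are not quotes or measurements.

```python
def hourly_cost(pools):
    """pools: iterable of (gpu_count, price_per_gpu_hour) pairs."""
    return sum(count * price for count, price in pools)

def savings_pct(monolithic, disaggregated):
    return (monolithic - disaggregated) / monolithic * 100

# Hypothetical prices and pool sizes; substitute your own quotes and sizing results.
monolithic = hourly_cost([(64, 4.00)])                # one pool sized for prefill peaks
disaggregated = hourly_cost([(16, 4.00), (32, 4.50)]) # prefill pool plus decode pool
print(f"monolithic:    ${monolithic:.2f}/h")
print(f"disaggregated: ${disaggregated:.2f}/h")
print(f"savings:       {savings_pct(monolithic, disaggregated):.1f}%")
```

The comparison only holds if both configurations meet the same latency targets at the same traffic, so size the pools from measured throughput before trusting the percentage.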

Meta, LinkedIn, Mistral, and HuggingFace are all running vLLM with disaggregated serving in production. The throughput gains reported range from 2x to 6.4x depending on the workload and hardware. SGLang’s DeepSeek-R1 deployment on GB200 measured a 4.8x decode throughput improvement over the same workload on H100, a figure that reflects the hardware change as well as the serving architecture.

Should you disaggregate? A decision framework

Before you refactor your serving stack, run through these five checks. They take ten minutes and will save you from either over-engineering a simple deployment or under-investing in one that’s bleeding money.

1. Measure your prefill-to-decode time ratio. Use your serving stack’s metrics endpoint (vLLM exposes Prometheus metrics at /metrics) or equivalent monitoring on your current deployment and record what percentage of wall-clock GPU time is spent in each phase. If decode accounts for less than 70% of request duration, your workload is prefill-heavy and disaggregation has a smaller utilization payoff. If decode is 85%+ of wall time, you’re paying for idle tensor cores most of the day.

2. Calculate your KV-cache transfer size using the formula above. If it exceeds 500 MB per request and your network is under 100 Gbps, the transfer latency will eat into your TTFT budget. Run the numbers for your actual model and median prompt length, not for a theoretical worst case.

3. Check your prefix cache hit rate. If you’re running agentic or multi-turn workloads with shared system prompts, measure how often the decode worker already holds a usable KV-cache from a previous turn. Hit rates above 80% reduce the value of a separate prefill pool because most prefill work is already skipped.

4. Count your GPUs. Below ~16 GPUs, the scheduling overhead of maintaining two pools typically exceeds the utilization gain. Above 32 GPUs with sustained traffic, the cost savings from right-sized hardware start to compound.

5. Audit your network. Check whether your nodes have RDMA-capable NICs (EFA on AWS, ConnectX on bare metal) and what bandwidth they support. If you’re limited to TCP, disaggregation can still work for long-context workloads, but the transfer latency will be higher than the numbers in this article assume.

If checks 1, 4, and 5 all come back favorable, disaggregation will almost certainly reduce your per-token serving cost. Start with vLLM’s built-in disaggregated prefilling mode, measure the impact on TTFT and ITL, and scale from there.
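The five checks fit in a small script you can keep next to your capacity model. This sketch uses the thresholds stated above. It treats the gray zones (for example 16-32 GPUs, or a decode share between 70% and 85%) as not yet favorable, and it reads check 5 as requiring both RDMA and at least 100 Gbps; both are my simplifications. The class and field names are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Deployment:
    decode_time_share: float  # check 1: fraction of request wall time spent in decode
    kv_transfer_mb: float     # check 2: KV-cache size per request at median prompt length
    prefix_hit_rate: float    # check 3: fraction of prefill skipped via prefix cache
    gpu_count: int            # check 4
    link_gbps: float          # check 5
    has_rdma: bool            # check 5

def run_checks(d: Deployment) -> dict:
    """True means the check favors disaggregation."""
    return {
        "1_decode_share": d.decode_time_share >= 0.85,
        "2_kv_transfer": not (d.kv_transfer_mb > 500 and d.link_gbps < 100),
        "3_prefix_cache": d.prefix_hit_rate <= 0.80,
        "4_gpu_count": d.gpu_count > 32,
        "5_network": d.has_rdma and d.link_gbps >= 100,
    }

def should_disaggregate(d: Deployment) -> bool:
    checks = run_checks(d)
    # the text treats checks 1, 4, and 5 as the deciding ones
    return checks["1_decode_share"] and checks["4_gpu_count"] and checks["5_network"]

big = Deployment(0.93, 1340, 0.30, 64, 400, True)
small = Deployment(0.93, 1340, 0.30, 4, 25, False)
print(should_disaggregate(big), should_disaggregate(small))
```

Checks 2 and 3 are reported but not used in the final decision here, matching the text. In practice a failing check 2 or 3 is a reason to measure more carefully before committing.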

What comes next

Most disaggregated deployments today still use the same GPU model for both pools. The SPAD research from UT Austin and NVIDIA’s own roadmap suggest that purpose-built prefill and decode silicon is coming. In the meantime, the practical version is using different cloud instance types: compute-optimized for prefill, memory-optimized for decode. Moonshot AI’s Mooncake architecture goes further, using CPU DRAM and SSDs as overflow tiers for KV-cache storage, keeping GPU HBM free for active decoding.

The Hao AI Lab called disaggregation “the defining principle of the modern inference stack” in their November 2025 retrospective. Eighteen months from research paper to production default at Perplexity, Meta, and NVIDIA is fast, even by ML infrastructure standards. The teams who plan for it now, with proper capacity modeling and network design, will spend less when traffic scales than the ones who adopt it reactively.