a distributed multi-agent system both in OpenClaw and AWS AgentCore for a while now. My OpenClaw setup alone has a research agent, a writing agent, a simulation engine, a heartbeat scheduler, and several more. They collaborate asynchronously, hand off context through shared files, and maintain state across sessions spanning days or weeks.

When I bring in other agentic systems like Claude Code or the agents I have deployed in AgentCore, coordination, memory, and state all become more difficult to solve for.

Eventually, I came to a realization: most of what makes these agents actually work isn’t the model choice. It’s the memory architecture.

So when I came across “Memory for Autonomous LLM Agents: Mechanisms, Evaluation, and Emerging Frontiers” (arXiv 2603.07670), I was curious whether the formal taxonomy matched what I’d built by feel and iteration. It does, pretty closely. It also codifies a lot of what I had found on my own, and it helped me see that some of my current pain points aren’t unique to me and are being seen more broadly.

Let’s walk through the survey and discuss its findings as I share my experiences.

Why Memory Matters More Than You Think

The paper leads with an empirical observation that should recalibrate your priorities if it hasn’t already:

“The gap between ‘has memory’ and ‘does not have memory’ is often larger than the gap between different LLM backbones.”

This is a huge claim. Swapping your underlying model matters less than whether your agent can remember things. I’ve felt this intuitively, but seeing it stated this plainly in a formal survey is useful. Practitioners spend enormous energy on model selection and prompt tuning while treating memory as an afterthought. That’s backward.

The paper frames agent memory inside a Partially Observable Markov Decision Process (POMDP) structure, where memory functions as the agent’s belief state over a partially observable world. That’s a tidy formalization. In practice, it means the agent can’t see everything, so it builds and maintains an internal model of what’s true. Memory is that model. Get it wrong, and every downstream decision degrades.

The Write-Manage-Read Loop

The paper characterizes agent memory as a write-manage-read loop, not just “store and retrieve.”

- Write: New information enters memory (observations, results, reflections)

- Manage: Memory is maintained, pruned, compressed, and consolidated

- Read: Relevant memory is retrieved and injected into the context

Most implementations I see nail “write” and “read” and completely neglect “manage.” They accumulate without curation. The result is noise, contradiction, and bloated context. Managing is the hard part, and it’s where most systems struggle or outright fail.
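To make the three phases concrete, here is a minimal sketch of the loop in Python. It is not the paper's code or anything from OpenClaw; the `LoopMemory` class, the `importance` score, and the word-overlap retrieval are all assumptions chosen to keep the example small.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryItem:
    text: str
    importance: float  # hypothetical 0..1 score assigned at write time
    step: int          # logical clock value when the item was written


@dataclass
class LoopMemory:
    max_items: int = 3
    items: list[MemoryItem] = field(default_factory=list)
    clock: int = 0

    def write(self, text: str, importance: float) -> None:
        """Write: record a new observation, result, or reflection."""
        self.clock += 1
        self.items.append(MemoryItem(text, importance, self.clock))

    def manage(self) -> None:
        """Manage: drop exact duplicates, then keep the most important items."""
        seen: set[str] = set()
        unique = []
        for item in self.items:
            if item.text not in seen:
                seen.add(item.text)
                unique.append(item)
        unique.sort(key=lambda m: (m.importance, m.step), reverse=True)
        self.items = unique[: self.max_items]

    def read(self, query: str, k: int = 2) -> list[str]:
        """Read: return up to k items sharing the most words with the query."""
        words = set(query.lower().split())
        scored = [(len(words & set(m.text.lower().split())), m) for m in self.items]
        scored = [(score, m) for score, m in scored if score > 0]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [m.text for _, m in scored[:k]]


mem = LoopMemory(max_items=2)
mem.write("deploy failed on staging", 0.9)
mem.write("deploy failed on staging", 0.9)   # duplicate, removed by manage()
mem.write("user prefers dark mode", 0.2)     # low importance, pruned
mem.write("staging database migrated", 0.7)
mem.manage()
print(mem.read("what happened on staging"))
# ['deploy failed on staging', 'staging database migrated']
```

A real system would replace the word overlap with embeddings or search, but the point stands: if `manage()` is never called, `items` only grows.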

Before the most recent OpenClaw enhancements, I was handling this with a heuristic control policy: rules for what to store, what to summarize, when to escalate to long-term memory, and when to let things age out. It’s not elegant, but it forces me to be explicit about the management step rather than ignoring it.
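A heuristic control policy of this kind can be sketched as a small rule function. The thresholds, the `Record` fields, and the `Action` names below are hypothetical and only illustrate the shape of such rules; they are not the rules I actually ran.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum


class Action(Enum):
    DISCARD = "discard"      # let it age out
    KEEP = "keep"            # leave in short-term storage as-is
    SUMMARIZE = "summarize"  # compress before keeping
    PROMOTE = "promote"      # escalate to long-term memory


@dataclass
class Record:
    text: str
    created: datetime
    times_referenced: int = 0
    pinned: bool = False


# Hypothetical thresholds; tune these for your own workload.
MAX_AGE = timedelta(days=14)
PROMOTE_AFTER_REFERENCES = 3
SUMMARIZE_OVER_CHARS = 500


def decide(record: Record, now: datetime) -> Action:
    """Apply the rules in priority order and return the first match."""
    if record.pinned or record.times_referenced >= PROMOTE_AFTER_REFERENCES:
        return Action.PROMOTE
    if now - record.created > MAX_AGE:
        return Action.DISCARD
    if len(record.text) > SUMMARIZE_OVER_CHARS:
        return Action.SUMMARIZE
    return Action.KEEP


now = datetime(2026, 3, 12)
old_note = Record("temp debugging note", created=now - timedelta(days=30))
print(decide(old_note, now).value)  # discard
```

Writing the rules down as code, even crudely, makes the ordering explicit: here a frequently referenced record is promoted before the age check can discard it.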

In other systems I build, I often rely on mechanisms such as AgentCore Short/Long-term memory, Vector Databases, and Agent Memory systems. The file-based memory system doesn’t scale well for large, distributed systems (though for agents or chatbots, it’s not off the table).

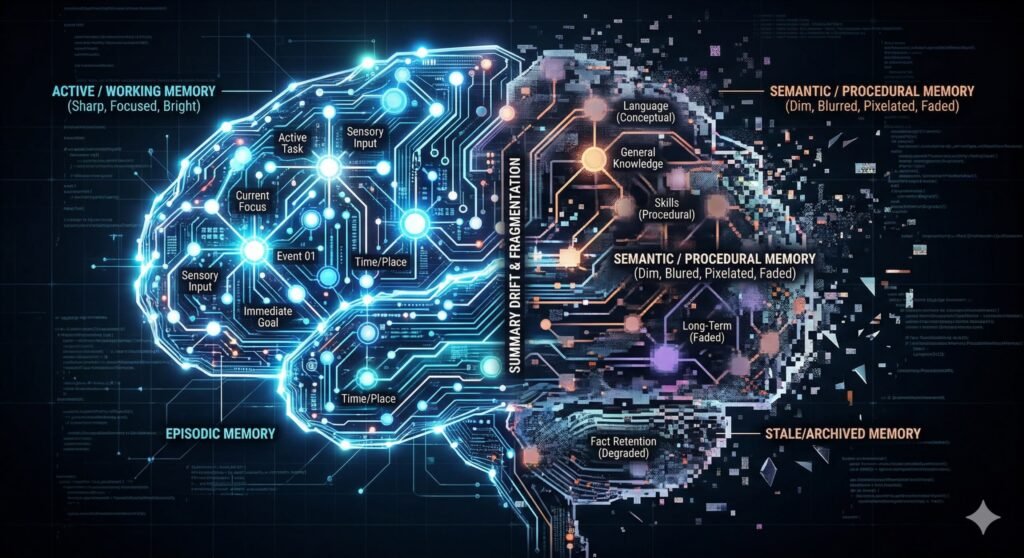

Four Temporal Scopes (And Where I See Them in Practice)

The paper breaks memory into four temporal scopes.

Working Memory

This is the context window.

It’s ephemeral, high-bandwidth, and limited. Everything lives here briefly. The failure mode is attentional dilution and the “lost in the middle” effect, where relevant content gets ignored because the window is too crowded. I’ve hit this, as have most of the teams I’ve worked with.

When OpenClaw, Claude Code, or your chatbot context gets long, agent behavior degrades in ways that are hard to debug because the model technically “has” the information but isn’t using it. The most common thing I see from teams (and myself) is to create new threads for different chunks of work. You don’t keep Claude Code open all day while working on 20+ different JIRA tasks; it degrades over time and performs poorly.

Episodic Memory

This captures concrete experiences: what happened, when, and in what sequence.

In my OpenClaw instance, these are the daily standup logs. Each agent writes a brief summary of what it did, what it found, and what it escalated. These accumulate as a searchable timeline. The practical value is enormous: agents can look back at yesterday’s work, spot patterns, and avoid repeating failures. Tools like Claude Code struggle with this unless you set up instructions to force the behavior.

Production agents can leverage things like AgentCore’s short-term memory to keep these episodic memories. There are even mechanisms to determine what deserves to be persisted beyond a single interaction.

The paper validates this as a distinct and important tier.

Semantic Memory

This holds abstracted, distilled knowledge: facts, heuristics, and learned conclusions.

In my OpenClaw, this is the MEMORY.md file in each agent’s workspace. It’s curated. Not everything goes in. The agent (or I, periodically) decides what’s worth preserving as a lasting truth versus what was situational.

In AgentCore Memory, this is primarily the long-term memory feature.

This curation step is critical; without it, semantic memory becomes a junk drawer.

Procedural Memory

This encodes executable skills, behavioral patterns, and learned behavior.

In OpenClaw, this maps mostly to the AGENTS.md and SOUL.md files, which contain persona instructions, behavioral constraints, and escalation rules. When the agent reads these at the start of the session, it’s loading procedural memory. These should be updated based on user feedback, or even through ‘dream’ processes that analyze interactions.

This is an area that I’ve been remiss in (as have teams that I’ve worked with). I spend time tuning a prompt, but the feedback mechanisms that drive the storage of procedural memory and the iteration on these personas often get left out.

The paper formalizes this as a distinct tier, which I found validating. These aren’t just system prompts. They’re a form of long-term learned behavior that shapes every action.

Five Mechanism Families

Now that we have some common definitions around the types of memories, let’s dive into memory mechanisms.

Context-Resident Compression

This covers sliding windows, rolling summaries, and hierarchical compression. These are the “stay in context” strategies. Rolling summaries are seductive because they feel clean (they’re not, I’ll get to why in a moment).

I’m sure everyone has run into Claude Code or Kiro CLI compressing a conversation when it gets too large for the context window. Oftentimes, you’re better off restarting a new thread.

Retrieval-Augmented Stores

This is RAG applied to agent interaction history rather than static documents. The agent embeds past observations and retrieves by similarity. This is powerful for long-running agents with deep history, but retrieval quality becomes a bottleneck fast. If your embeddings don’t capture semantic intent well, you’ll miss relevant memories and surface stale ones.

You also run into issues where questions like ‘what happened last Monday’ don’t retrieve quality memories.
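One common workaround, sketched below under my own assumptions, is to resolve the temporal phrase to a concrete date and filter on stored timestamps instead of relying on embedding similarity. The `last_weekday` and `entries_on` helpers and the log layout are hypothetical.

```python
from datetime import date, timedelta


def last_weekday(today: date, weekday: int) -> date:
    """Most recent given weekday strictly before today (Monday=0 ... Sunday=6)."""
    days_back = (today.weekday() - weekday) % 7 or 7
    return today - timedelta(days=days_back)


def entries_on(log: list[tuple[date, str]], day: date) -> list[str]:
    """Return the text of every log entry written on the given day."""
    return [text for entry_day, text in log if entry_day == day]


today = date(2026, 3, 12)  # a Thursday
log = [
    (date(2026, 3, 9), "research agent: finished survey notes"),
    (date(2026, 3, 10), "writing agent: drafted outline"),
]
monday = last_weekday(today, 0)
print(monday, entries_on(log, monday))
# 2026-03-09 ['research agent: finished survey notes']
```

The similarity search can still rank results within that date window; the timestamp filter just keeps "last Monday" from being treated as a fuzzy topic.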

Reflective Self-Improvement

This includes systems such as Reflexion and ExpeL, where agents write verbal post-mortems and store conclusions for future runs. The idea is compelling; agents learn from mistakes and improve. The failure mode is severe, though (we will cover it in more detail in a minute).

I believe other ‘dream’ based reflection and systems like the Google Memory Agent pattern belong to this class as well.

Hierarchical Virtual Context

This is MemGPT’s OS-inspired architecture (see also the GitHub repo). The main context window is “RAM”, a recall database is the “disk”, and archival storage is “cold storage”, while the agent manages its own paging. While this category is interesting, the work of maintaining these separate tiers is burdensome, and the approach tends to fail.
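The tiering idea can be illustrated with a toy sketch. This is not MemGPT's actual API or implementation: the `TieredContext` class, its capacities, and the keyword-based `page_in` are assumptions, and eviction here is automatic, whereas in the MemGPT design the agent itself decides what to page.

```python
from collections import deque


class TieredContext:
    """Toy three-tier memory: main context, recall store, archive."""

    def __init__(self, main_capacity: int, recall_capacity: int) -> None:
        self.main: deque[str] = deque()    # "RAM": what the model sees
        self.recall: deque[str] = deque()  # "disk": recently evicted messages
        self.archive: list[str] = []       # "cold storage": everything older
        self.main_capacity = main_capacity
        self.recall_capacity = recall_capacity

    def add(self, message: str) -> None:
        """Append to main context, spilling the oldest items down a tier."""
        self.main.append(message)
        while len(self.main) > self.main_capacity:
            self.recall.append(self.main.popleft())
        while len(self.recall) > self.recall_capacity:
            self.archive.append(self.recall.popleft())

    def page_in(self, keyword: str) -> list[str]:
        """Search recall, then archive, for messages containing the keyword."""
        lower_tiers = list(self.recall) + self.archive
        return [m for m in lower_tiers if keyword.lower() in m.lower()]


ctx = TieredContext(main_capacity=2, recall_capacity=2)
for msg in ["set API key", "ran tests", "fixed bug", "opened PR", "merged PR"]:
    ctx.add(msg)
print(list(ctx.main))         # ['opened PR', 'merged PR']
print(ctx.page_in("api key"))  # ['set API key']
```

Even in this toy version you can see the maintenance cost: every capacity and eviction rule is another policy that can quietly do the wrong thing.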

The MemGPT paper and git repo are both almost 3 years old, and I have yet to see any actual use in production.

Policy-Learned Management

This is a new frontier approach in which memory operations (such as store, retrieve, update, summarize, and discard) are RL-trained operators that models learn to invoke optimally. I think there is a lot of promise here, but I haven’t seen real harnesses for builders to use or any actual production use.

Failure Modes

We’ve covered the types of memories and the systems that make them. Next is how these can fail.

Context-Resident Failures

Summarization drift occurs when you repeatedly compress history to fit it within a context window. Each compression/summarization throws away details, and eventually, you’re left with memory that doesn’t really match what happened. Again, you see this in Claude Code and Kiro CLI when coding sessions cover too many features without creating new threads. One way I’ve seen teams combat this is to keep raw memories linked to the summarized/consolidated memories.

Attention dilution is the other failure mode in this category. Even if you can keep everything in context (as with the new 1 million-token windows), larger prompts “lose” information in the middle. While agents technically have all the memories, they can’t focus on the right parts at the right time.

Retrieval Failures

Semantic vs. causal mismatch occurs when similarity searches return memories that seem related but aren’t. Embeddings are great at determining when texts ‘look like’ each other, but are terrible at knowing ‘this is the cause’. In practice, I often see this when debugging through coding assistants. They see similar errors but can miss the underlying cause, which often leads to thrashing: a lot of changes that never fix the real issue.

Memory blindness occurs in tiered systems when important facts never resurface. The data exists, but the agent never sees it again. This can be because a sliding window has moved on, or because you only retrieve 10 memories from a data source when what you needed would have been the 11th.

Silent orchestration failures are the most dangerous in this category. Paging, eviction, or archival policies do the wrong things, but no errors are thrown (or the errors are lost in the noise, missed by the autonomous system or by the humans running it). The only symptom will be that responses get worse, get more generic, and get less grounded. While I’ve seen this arise in several ways, the most recent for me was when OpenClaw failed to write daily memory files, so daily stand-ups/summarizations had nothing to work with. I only noticed because it kept forgetting things we worked on during those days.
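A cheap guard against this kind of silent gap is a check that fails loudly when an expected memory file is missing. The sketch below assumes one file per day named `YYYY-MM-DD.md`; that naming is my assumption for illustration, not a documented OpenClaw layout, and `missing_days` is a hypothetical helper.

```python
from datetime import date, timedelta
from typing import Iterable


def missing_days(existing_names: Iterable[str], start: date, end: date) -> list[date]:
    """Return each day in [start, end] that has no 'YYYY-MM-DD.md' file."""
    present = set(existing_names)
    missing = []
    day = start
    while day <= end:
        if f"{day.isoformat()}.md" not in present:
            missing.append(day)
        day += timedelta(days=1)
    return missing


# In practice the names would come from the memory directory, for example
# (p.name for p in pathlib.Path(memory_dir).iterdir()).
names = ["2026-03-01.md", "2026-03-03.md"]
gaps = missing_days(names, date(2026, 3, 1), date(2026, 3, 3))
print(gaps)  # [datetime.date(2026, 3, 2)]
```

Running something like this from the scheduler and alerting on a non-empty result turns a slow quality decline into an explicit error.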

Knowledge-Integrity Failures

Staleness is probably the most common. The outside world changes, but your system memory doesn’t. Addresses, device states, user preferences, and anything that your system relies on to make decisions can drift over time. Long-lived agents will act on data from 2024 even in 2026 (who hasn’t seen an LLM insist the date is wrong, the wrong President is in office, or that the latest technology hasn’t actually hit the scene yet?).

Self-reinforcing errors (confirmation loops) occur when a system treats a memory as ground truth, but that memory is wrong. While you generally want systems to learn and build a new basis of truth, if a system creates a bad memory, its view of the world is affected. In my OpenClaw instance, it decided that my SmartThings integration with my Home Assistant was faulty; therefore, all information from a SmartThings device was deemed erroneous, and it ignored everything from it (in fact, there were just a few dead batteries in my system).

Over-generalization is a quieter version of self-reinforcement. Agents learn a lesson in a narrow context, then apply it everywhere. A workaround for a single customer or a single error becomes a default pattern.

Environmental Failure

Contradiction handling can be incredibly frustrating. As new information is collected, if it conflicts with existing information, systems can’t always determine the actual truth. In my OpenClaw system, I asked it to create some N8N workflows. They were all created correctly, but the action timed out, so it thought it had failed. I verified the workflows existed, told my OpenClaw agent to remember it, and it agreed. For the next several interactions, the agent oscillated between believing the workflows were available and believing it had failed to set them up.

Design Tensions

Every agent memory system has to balance competing goals, and there is going to be push-and-pull among all of the following.

Utility vs. Efficiency

Better memory usually means more tokens, more latency, more storage, more systems.

Utility vs. Adaptivity

Memory that is useful now will be stale at some point. Updating is expensive and risky.

Adaptivity vs. Faithfulness

The more you update, revise, and compress, the more you risk distorting what actually happened.

Faithfulness vs. Governance

Accurate memory may contain sensitive information (PHI, PII, etc.) that you may be required to delete, obfuscate, or protect.

All of the above vs. Governance

Enterprises have complex compliance requirements that can conflict with all these.

Practical Takeaways for Builders

I’m often asked by engineering teams for the best memory system or where they should start their journey. Here’s what I say.

Start with explicit temporal scopes

Don’t build “memory”. When you need episodic memory, build it. When your use case grows and needs semantic memory, build it. Don’t try to find one system that does it all, and don’t build every form of memory before you need it.

Take the management step seriously

Plan how to maintain your memory. Don’t plan on accumulating indefinitely; figure out if you need compression or memory connection/dream behavior. How will you know what goes into semantic memory versus RAG memory? How do you handle updates? Without knowing these, you’ll accumulate noise, get contradictions, and your system will degrade.

Keep raw episodic records

Don’t just rely on summaries; they can drift or lose details. Raw records let you return to what actually happened and pull them in when necessary.
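One way to keep that path back to the source is to have every summary carry the IDs of the raw records it was built from. This is a minimal sketch under my own assumptions; `EpisodicStore`, `RawRecord`, and `Summary` are hypothetical names, and the summary text is supplied by the caller rather than generated.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class RawRecord:
    record_id: str
    text: str


@dataclass
class Summary:
    text: str
    source_ids: list[str] = field(default_factory=list)


class EpisodicStore:
    def __init__(self) -> None:
        self._raw: dict[str, RawRecord] = {}

    def add_raw(self, record: RawRecord) -> None:
        """Store the raw record; it is never overwritten by a summary."""
        self._raw[record.record_id] = record

    def summarize(self, ids: list[str], summary_text: str) -> Summary:
        """Attach a caller-supplied summary to the raw records it covers."""
        unknown = [i for i in ids if i not in self._raw]
        if unknown:
            raise KeyError(f"unknown record ids: {unknown}")
        return Summary(summary_text, list(ids))

    def expand(self, summary: Summary) -> list[str]:
        """Return the original text behind a summary, in order."""
        return [self._raw[i].text for i in summary.source_ids]


store = EpisodicStore()
store.add_raw(RawRecord("r1", "10:02 workflow create call timed out"))
store.add_raw(RawRecord("r2", "10:05 user confirmed workflows exist"))
digest = store.summarize(["r1", "r2"], "Workflows were created despite a timeout.")
print(store.expand(digest))
# ['10:02 workflow create call timed out', '10:05 user confirmed workflows exist']
```

When a summary looks wrong or too vague, the agent (or you) can call `expand` and re-read what actually happened.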

Version reflective memory

To help avoid contradictions in summaries, long-term memories, and compressions, add timestamps or versions to each. This can help your agents determine what is true and what is the most accurate reflection of the system.
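As a rough illustration of versioning, the sketch below keeps every observation of a fact with its timestamp and treats the most recent one as current. The `VersionedFacts` class, the `source` label, and the "latest wins" rule are assumptions; a real system might weight sources differently.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass(frozen=True)
class Fact:
    key: str
    value: str
    observed_at: datetime
    source: str  # hypothetical provenance label, e.g. "tool" or "user"


class VersionedFacts:
    def __init__(self) -> None:
        self._history: dict[str, list[Fact]] = {}

    def record(self, fact: Fact) -> None:
        """Append a new version instead of overwriting the old one."""
        self._history.setdefault(fact.key, []).append(fact)

    def current(self, key: str) -> Optional[Fact]:
        """Return the most recently observed version, if any."""
        versions = self._history.get(key)
        if not versions:
            return None
        return max(versions, key=lambda f: f.observed_at)

    def history(self, key: str) -> list[Fact]:
        """Return all versions of a fact, oldest first."""
        return sorted(self._history.get(key, []), key=lambda f: f.observed_at)


facts = VersionedFacts()
facts.record(Fact("n8n_workflows", "failed", datetime(2026, 3, 12, 10, 0), "tool"))
facts.record(Fact("n8n_workflows", "created", datetime(2026, 3, 12, 10, 5), "user"))
print(facts.current("n8n_workflows").value)  # created
```

Keeping the older "failed" entry in `history` matters as much as picking the current value: it lets the agent explain why it once believed something different instead of oscillating.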

Treat procedural memory as code

In OpenClaw, your AGENTS.md, MEMORY.md, persona files, and behavioral configs are all part of your memory architecture. Review them and keep them under source control so you can examine what changes and when. This is especially important if your autonomous system can alter these based on feedback.

Wrap-Up

The write-manage-read framing is the most useful takeaway from this paper. It’s simple, it’s complete, and it forces you to think about all three phases instead of just “store stuff, retrieve stuff.”

The taxonomy maps surprisingly well to what I built in OpenClaw through iteration and frustration. That’s either validating or humbling, depending on how you look at it (probably both). The paper formalizes patterns that practitioners have been discovering independently, which is what a good survey should do.

The open problems section is honest about how much is unsolved. Evaluation is still primitive. Governance is mostly ignored in practice. Policy-learned management is promising but immature. There’s a lot of runway here.

Memory is where the real differentiation happens in agent systems. Not the model, not the prompts. The memory architecture. The paper gives you a vocabulary and a framework to think more clearly about it.

About

Nicholaus Lawson is a Solution Architect with a background in software engineering and AI/ML. He has worked across many verticals, including Industrial Automation, Health Care, Financial Services, and Software companies, from start-ups to large enterprises.

This article and any opinions expressed by Nicholaus are his own and not a reflection of his current, past, or future employers or any of his colleagues or affiliates.

Feel free to connect with Nicholaus via LinkedIn at https://www.linkedin.com/in/nicholaus-lawson/