That said, the results also show the limits of working with current AI models, largely because, unlike a human, they can’t really explain how they arrive at their decisions. For example, some of the models made very different suggestions from each other, which the researchers say implies that they are exploring different regions of the space of possible sequences. But we don’t actually know whether that’s the case, or whether each model had mathematical reasons for disliking the other’s suggestions.

That’s one of a number of cases in the paper where the researchers tried to reason backward from a model’s output to what it was doing. In at least one case, the software redesigned the entire structural element (an alpha helix) that contained the isoleucine it changed, and the researchers don’t even hazard a guess as to why.

It’s a good reminder that, at the moment, these software packages are tools: they let us do things that would otherwise not be possible, but they don’t actually help us understand all that much. We’re still left to reason through phenomena using the neural networks inside our skulls.

This doesn’t necessarily have to be the case; we could put more emphasis on exposing the inner workings of this software when developing it in order to get some insights into its decision-making process. But for now, I think the emphasis has been (quite reasonably) on getting something that works.

An amazing achievement, but is it useful?

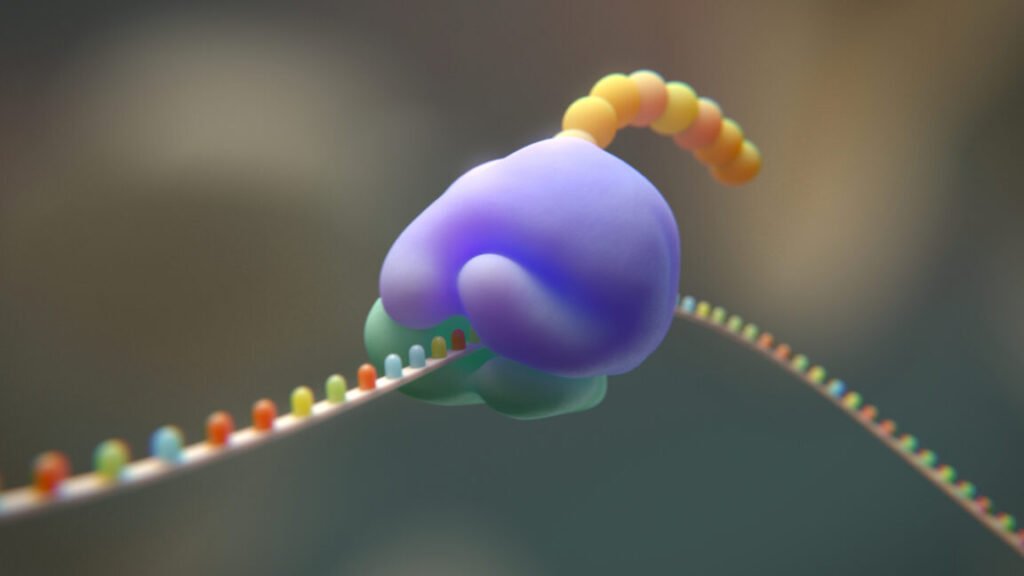

Overall, this is astonishing work. These proteins have to interact with each other; with ribosomal RNAs, transfer RNAs, and messenger RNAs; with the growing proteins the ribosome makes; and with all the normal proteins over on the large subunit. Each of those components has had billions of years to evolve the ability to work with the others. The fact that we could make such radical changes to the system over the course of a couple of years is just mind-blowing.