Authors: Ahsaas Bajaj and Benjamin S Knight

Ridge, Lasso, or ElasticNet: which regularizer should you use? We ran 134,400 simulations grounded in real production ML models to find out. The answer depends on what you’re optimizing for, and on a single diagnostic you can compute before fitting a model.

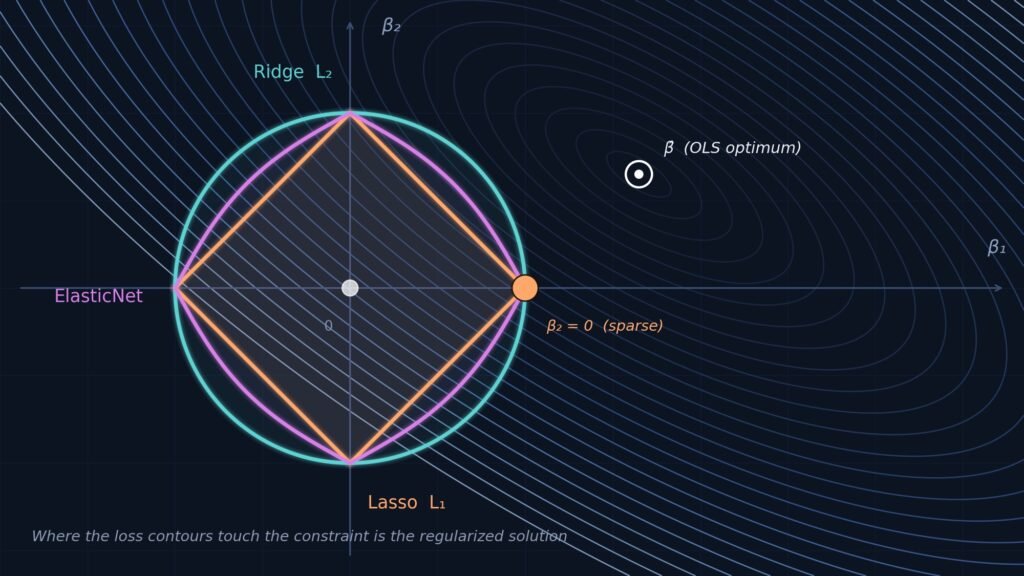

If you’ve ever trained a linear model in scikit-learn, you’ve faced this question: RidgeCV, LassoCV, or ElasticNetCV? Maybe you defaulted to whatever a tutorial recommended. Maybe a colleague had a strong opinion. Maybe you tried all three and picked whichever gave the best cross-validation score.

We wanted to replace intuition with empirical decision-making.

We ran 134,400 simulations across 960 configurations of a 7-dimensional parameter space, varying sample size, feature count, multicollinearity, signal-to-noise ratio, coefficient sparsity, and two more parameters. We benchmarked four regularization frameworks (Ridge, Lasso, ElasticNet, and Post-Lasso OLS) across three objectives:

- Predictive accuracy (test RMSE)

- Variable selection (F1 score for recovering the true feature set)

- Coefficient estimation (L2 error vs. true coefficients)

Our simulation ranges aren’t arbitrary. They’re grounded in eight real-world production ML models from Instacart, spanning demand forecasting, conversion prediction, and inventory intelligence. The regimes we tested reflect conditions that MLEs actually encounter in practice.

This post distills the practical guidance from our study into a decision framework you can use on your next project. If you’re a Data Scientist or MLE choosing a regularizer, this is for you.

The Headlines

Before we get into the details:

- For prediction, it barely matters. Ridge, Lasso, and ElasticNet differ by at most 0.3% in median RMSE, and no simulation parameter achieves even a small effect size for the RMSE differences among them. This only holds with adequate training data (n/p ≥ 78, i.e., at least 78 observations per feature).

- For variable selection, it matters enormously, especially under multicollinearity. Lasso’s recall collapses to 0.18 under high condition numbers with low signal, while ElasticNet maintains 0.93.

- At large sample-to-feature ratios (n/p ≥ 78), the methods become interchangeable. Use Ridge; it’s the fastest.

- Avoid Post-Lasso OLS, whatever you’re optimizing for. It’s the only method that consistently underperforms, and it does so on every objective we measured.

What We Tested and Why

Our simulation framework varies seven simulation parameters simultaneously:

We ran each of the four regularization frameworks against 960 simulation configurations, each with 35 random seeds, for a total of 134,400 simulations (4 × 960 × 35). For every simulation we logged the test RMSE, the F1 score (the harmonic mean of precision and recall in recovering the true support of β), and the coefficient L2 error.
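For readers who want to compute the two non-RMSE metrics on their own experiments, here is a minimal sketch of support-recovery F1 and coefficient L2 error. The function names and the tol cutoff for treating a coefficient as zero are our assumptions, not taken from the study’s harness.

```python
import numpy as np


def support_f1(beta_true, beta_hat, tol=1e-8):
    """F1 score for recovering the support (nonzero entries) of beta_true.

    A coefficient counts as "selected" when its magnitude exceeds tol
    (an illustrative cutoff, not the study's setting).
    """
    true_support = np.abs(beta_true) > tol
    est_support = np.abs(beta_hat) > tol
    tp = np.sum(true_support & est_support)   # true features we kept
    fp = np.sum(~true_support & est_support)  # noise features we kept
    fn = np.sum(true_support & ~est_support)  # true features we dropped
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return float(2 * precision * recall / (precision + recall))


def coef_l2_error(beta_true, beta_hat):
    """Euclidean (L2) distance between estimated and true coefficients."""
    return float(np.linalg.norm(np.asarray(beta_hat) - np.asarray(beta_true)))


beta_true = np.array([1.5, 0.0, -2.0, 0.0])
beta_hat = np.array([1.2, 0.3, 0.0, 0.0])  # one hit, one false positive, one miss
print(support_f1(beta_true, beta_hat))     # 0.5
print(coef_l2_error(beta_true, beta_hat))
```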

To measure what drives the differences between methods, we used omega-squared (ω²) from one-way ANOVA, an effect size that tells us what proportion of variance in performance gaps is explained by each parameter. This goes beyond asking “which method wins” to understanding why it wins, and under what conditions.

Here’s what this means in practice: most of the parameters that drive method differences are things you can observe before fitting a model. You know n and p. You can compute the condition number κ with numpy.linalg.cond(X). And the one important latent parameter, SNR, has a free diagnostic proxy: the regularization strength α that LassoCV selects. High α signals low signal; low α signals strong signal. We’ll come back to this.
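A minimal sketch of computing all three diagnostics with NumPy and scikit-learn. The helper name prefit_diagnostics and the synthetic data are hypothetical; the cv and random_state settings are arbitrary choices.

```python
import numpy as np
from sklearn.linear_model import LassoCV


def prefit_diagnostics(X, y, cv=5, random_state=0):
    """Compute n/p, the condition number kappa, and LassoCV's selected alpha."""
    n, p = X.shape
    # Ratio of the largest to the smallest singular value of X; works for
    # non-square X. kappa is scale-sensitive, so compute it on X exactly as
    # you plan to fit it (after any standardization).
    kappa = float(np.linalg.cond(X))
    # The alpha LassoCV selects serves as a rough, inverse SNR proxy.
    lasso = LassoCV(cv=cv, random_state=random_state).fit(X, y)
    return {"n_over_p": n / p, "kappa": kappa, "lasso_alpha": float(lasso.alpha_)}


# Example on synthetic data: 500 rows, 20 features, 5 of them informative.
rng = np.random.default_rng(0)
X = rng.normal(size=(500, 20))
y = X[:, :5] @ np.ones(5) + rng.normal(size=500)
print(prefit_diagnostics(X, y))
```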

Finding 1: For Prediction, Just Use Ridge

This is the most important finding for the largest number of practitioners.

Ridge, Lasso, and ElasticNet are nearly interchangeable for prediction. Across all 33,600 simulations per method, the median test RMSE differs by at most 0.3%. Our omega-squared analysis confirms this: no single simulation parameter achieves even a small effect size (ω² ≥ 0.01) for RMSE differences among these three methods. Every pairwise comparison is negligible (all < 0.02).

For practitioners who only care about accuracy, the near-equivalence is itself the finding. Regularizer choice matters far less than sample size.

So why Ridge? Computational efficiency. Ridge has a closed-form solution for each candidate α, which makes it faster than both alternatives and dramatically faster than ElasticNet: Ridge’s median runtime was 6 seconds, versus 9 seconds for Lasso and 48 seconds for ElasticNet.
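To see why a closed form makes Ridge cheap across an α grid, here is a minimal NumPy sketch: one thin SVD of X yields the Ridge solution for every candidate α, with only a cheap rescaling and a matrix-vector product per α. This illustrates the idea and is not scikit-learn’s internal code (RidgeCV, with its default cv=None, uses an efficient leave-one-out scheme built on a related decomposition). The function name is hypothetical, and the sketch fits no intercept.

```python
import numpy as np


def ridge_path_svd(X, y, alphas):
    """Ridge coefficients for every alpha in `alphas` from one thin SVD of X.

    Minimizes ||y - X b||^2 + alpha * ||b||^2 with no intercept (center X and y
    first if you need one). Closed form: b(alpha) = V diag(s / (s^2 + alpha)) U^T y.
    """
    U, s, Vt = np.linalg.svd(X, full_matrices=False)
    Uty = U.T @ y  # computed once, reused for every alpha
    return np.array([Vt.T @ (s / (s**2 + a) * Uty) for a in alphas])


rng = np.random.default_rng(1)
X = rng.normal(size=(200, 10))
y = X @ rng.normal(size=10) + rng.normal(size=200)
alphas = np.logspace(-3, 3, 13)
coefs = ridge_path_svd(X, y, alphas)
print(coefs.shape)  # (13, 10)
```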

ElasticNet’s overhead stems from its joint grid search over α and the L1 ratio ρ. The 167–219× mean overhead we measured is specific to our 8-value L1 ratio grid; a coarser 3-value grid would reduce it roughly proportionally. That overhead buys a median RMSE improvement of just 0.04% over Ridge, a margin that’s negligible in practice. Lasso carries its own runtime risk: when the coefficient distribution is approximately uniform, it can take over an hour to converge (see the right side of the bimodal runtime distribution).
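If ElasticNet’s compute budget is a concern, the L1 ratio grid is the main knob. A minimal sketch with scikit-learn’s ElasticNetCV, assuming a hypothetical 3-value grid (the study’s 8 values aren’t listed here) and synthetic data:

```python
import numpy as np
from sklearn.linear_model import ElasticNetCV

rng = np.random.default_rng(2)
X = rng.normal(size=(300, 15))
y = X[:, :4] @ np.array([2.0, -1.0, 1.5, 0.5]) + rng.normal(size=300)

# Illustrative coarse grid of mixing values. ElasticNetCV fits one regularization
# path per (l1_ratio, fold) pair, so cost grows roughly linearly with len(l1_ratio).
coarse_grid = [0.1, 0.5, 0.9]
enet = ElasticNetCV(l1_ratio=coarse_grid, cv=5).fit(X, y)
print(enet.l1_ratio_, enet.alpha_)  # selected mixing value and penalty strength
```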

Caveats

At the smallest sample size we tested (n = 100), ElasticNet can beat Ridge on RMSE by 5–15%, but only in a specific corner: when SNR is high (~1.0). At low SNR, Ridge is actually marginally better. These are localized observations at the extreme of our simulation grid, not systematic trends.

One more note: LassoLars wasn’t part of our research design, but the LARS algorithm computes the entire Lasso regularization path analytically in a single pass (O(np²)), potentially matching Ridge’s closed-form speed advantage. However, LARS is known to be numerically unstable under high-collinearity conditions (κ > 10⁴) that characterize most production ML feature sets. This is precisely the regime where our strongest findings apply.

Bottom line for prediction: Default to RidgeCV. Sample size matters far more than regularizer choice. But prediction isn’t the only objective worth optimizing. When variable selection or coefficient accuracy matters, especially under multicollinearity, the story changes dramatically.

Finding 2: For Variable Selection, ElasticNet Is the Safe Default

Here method choice actually matters. Variable selection, the task of identifying which features truly contribute to the outcome, is the objective most sensitive to the regularizer, and where getting it wrong carries the steepest cost.

What Drives the Differences

From our ANOVA decomposition of pairwise F1 differences:

Sample size dominates overwhelmingly. But once you’re in the small-n regime (n/p < 78), the condition number and SNR become the primary differentiators.

High Multicollinearity (κ > ~10⁴): Do Not Use Lasso

This is one of the most robust findings in the entire study, and it’s directly relevant to production ML. Seven of eight models we surveyed operate in the high-κ regime. If your features are even moderately correlated (which they almost certainly are in any engineered feature set), this finding applies to you.

At high κ with low SNR:

- Lasso recall: 0.18 (it misses 82% of true features)

- ElasticNet recall: 0.93 (it catches 93% of true features)

That’s a 5× recall advantage for ElasticNet. The mechanism is well known: when features are highly correlated, Lasso tends to pick one feature from each correlated group more or less arbitrarily and zero out the rest. ElasticNet’s L2 penalty component produces the “grouping effect” described by Zou and Hastie (2005), which keeps correlated features together.
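A toy illustration of the grouping effect, using the extreme case of two perfectly collinear columns. The synthetic data and the α and l1_ratio values are chosen for illustration, not taken from the study.

```python
import numpy as np
from sklearn.linear_model import ElasticNet, Lasso

rng = np.random.default_rng(0)
n = 200
z = rng.normal(size=n)
# Columns 0 and 1 are exact copies of the same signal; column 2 is irrelevant noise.
X = np.column_stack([z, z, rng.normal(size=n)])
y = 2.0 * z + rng.normal(scale=0.5, size=n)

lasso = Lasso(alpha=0.1).fit(X, y)
enet = ElasticNet(alpha=0.1, l1_ratio=0.5).fit(X, y)

print("Lasso:     ", np.round(lasso.coef_, 3))  # weight lands on one copy only
print("ElasticNet:", np.round(enet.coef_, 3))   # weight split across both copies
```

In this setup, Lasso’s cyclic coordinate descent puts the shared weight on one copy and leaves the other at (numerically) zero, while ElasticNet splits it roughly evenly between the two.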

Our simulations show this isn’t a corner case. The strongest F1 differences (ΔF1 of 0.50–0.75) concentrate squarely in the high-κ columns at n = 100 and n = 1,000. This is the common case in production.

Low Multicollinearity (κ < ~10²): Still Default to ElasticNet

You might expect Lasso to finally shine at low κ. It doesn’t, at least not universally. Even at low κ, Lasso’s recall is highly sensitive to the signal-to-noise ratio (see below).

ElasticNet maintains recall ≥ 0.91 regardless of SNR, even at low κ. Lasso is only competitive when both SNR is high and the true model is genuinely sparse. Since you typically don’t know SNR in advance, ElasticNet is the safer bet.

The Ridge Surprise

We didn’t expect this: Ridge frequently achieves the highest F1 scores at small n, despite never performing explicit variable selection. How? Ridge’s recall is always 1.0 because it retains every feature, and that perfect recall outweighs the precision advantage of sparse methods whenever their recall collapses under low SNR.

But this isn’t genuine variable selection. Ridge gives you a nonzero coefficient for every feature. If you need an explicitly sparse model, Ridge doesn’t help. Combining Ridge with post-hoc permutation importance is a natural extension, but we didn’t evaluate it here.
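The arithmetic behind the Ridge surprise is easy to check. If s of p features are truly nonzero, Ridge keeps all of them, so its precision is s/p and its recall is 1. The numbers below are hypothetical: p = 100, s = 50, and a Lasso with perfect precision but the collapsed 0.18 recall reported above.

```latex
\mathrm{F1} = \frac{2\,\mathrm{precision}\cdot\mathrm{recall}}{\mathrm{precision}+\mathrm{recall}},
\qquad
\mathrm{F1}_{\text{Ridge}} = \frac{2\,(s/p)\cdot 1}{s/p + 1} = \frac{2s}{s+p}

p = 100,\; s = 50:\qquad
\mathrm{F1}_{\text{Ridge}} = \frac{100}{150} \approx 0.667,
\qquad
\mathrm{F1}_{\text{Lasso}} = \frac{2 \cdot 1.0 \cdot 0.18}{1.0 + 0.18} \approx 0.305
```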

Variable Selection: Summary

Bottom line for variable selection: ElasticNetCV is the safe default. Lasso only earns its place when κ is low, SNR is high, and you have domain reason to believe the true model is sparse.

Finding 3: For Coefficient Estimation, Branch on κ

When the goal is recovering accurate coefficient values, for interpretability or causal inference, the condition number κ becomes the key branching variable. Ideally we would branch on the distribution of the true β coefficients, but we can’t observe it; κ, by contrast, can be measured directly. At high κ ElasticNet dominates regardless of sparsity. At low κ, the optimal method depends on whether the true model is sparse or dense. Sample size changes the magnitude of differences but not their direction.

High κ (> ~10⁴): Use ElasticNet. It achieves 20–40% lower L2 coefficient error than Lasso, and holds a consistent edge over Ridge regardless of sparsity level.

Low κ (< ~10²): Branch on your domain knowledge about sparsity.

- Sparse domain (genomics, text classification, sensor arrays): Lasso or ElasticNet

- Dense domain (engineered feature sets, demand forecasting, conversion models): Ridge

All regimes: Avoid Post-Lasso OLS. It shows higher coefficient L2 error than standard Lasso across the entire simulation grid. The unpenalized OLS refit amplifies first-stage selection errors. This is the scenario where you’d hope the two-stage procedure helps, and it doesn’t.
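For reference, a minimal sketch of the two-stage Post-Lasso OLS procedure as it’s commonly defined: LassoCV selects a support, then ordinary least squares refits only those columns without a penalty. The helper name and the cv and tol settings are assumptions; the study’s exact configuration isn’t given here.

```python
import numpy as np
from sklearn.linear_model import LassoCV, LinearRegression


def post_lasso_ols(X, y, cv=5, tol=1e-10):
    """Stage 1: LassoCV picks the support. Stage 2: unpenalized OLS refits it."""
    lasso = LassoCV(cv=cv).fit(X, y)
    support = np.flatnonzero(np.abs(lasso.coef_) > tol)
    coef = np.zeros(X.shape[1])  # features Lasso dropped stay at exactly zero
    if support.size == 0:
        return coef, support
    ols = LinearRegression().fit(X[:, support], y)
    coef[support] = ols.coef_    # refit values replace the shrunken Lasso values
    return coef, support


rng = np.random.default_rng(3)
X = rng.normal(size=(200, 10))
y = X[:, :3] @ np.array([3.0, -2.0, 1.0]) + rng.normal(size=200)
coef, support = post_lasso_ols(X, y)
print(support, np.round(coef, 2))
```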

Bottom line for coefficient estimation: ElasticNet at high κ, domain-dependent at low κ, never Post-Lasso OLS.

A Practitioner’s Decision Guide

All of the findings above distill into a decision framework that branches exclusively on quantities you can compute up front: the sample-to-feature ratio n/p and the condition number κ (via numpy.linalg.cond(X)), both available before fitting any model, and, when finer discrimination is needed, the regularization strength α elected by a quick LassoCV run as a proxy for the latent SNR.

The full flowchart is available in our paper (Figure 7). Here, we walk through the logic as a decision tree.

The under-determined regime

If your feature count exceeds your sample size, you’re in the under-determined regime. Lasso’s α frequently saturates at the upper boundary of the search grid here, and its recall collapses. Default to Ridge or ElasticNet for all objectives, and proceed with caution.

The large-sample regime

If n/p ≥ 78, you’re in the large-sample regime where all methods converge. Performance gaps vanish across prediction, variable selection, and coefficient estimation simultaneously.

Use RidgeCV. It’s the fastest method by a wide margin, and there is no accuracy penalty. If you specifically need a sparse model for interpretability, ElasticNetCV or LassoCV are perfectly fine at this ratio. The choice among them is immaterial.

The regime where choice matters

Below n/p = 78 is where method choice matters most. The right regularizer depends on what you’re optimizing for.

If prediction is your priority: Use RidgeCV. The RMSE differences among the core three methods are too small to justify additional complexity or compute. One narrow exception: at n ≈ 100 with high SNR (~1.0), ElasticNet offers a detectable 5–15% edge regardless of κ; at n ≈ 100 with very low SNR, Ridge is marginally preferred. In either case, the margin is modest relative to the improvement available from increasing sample size.

If variable selection is your priority: Branch on the condition number.

- κ > ~10⁴ (high multicollinearity): Use ElasticNetCV. This is among the strongest recommendations in the study. One nuance: at moderate-to-high SNR (or n ≥ 1,000), ElasticNet is clearly preferred, with F1 advantages over Lasso reaching ΔF1 of +0.75. At very low SNR with n ≈ 100 (diagnosed by a saturated CV-elected α), Ridge achieves the highest F1, but only through perfect recall (retaining all features), not genuine variable selection. If you need an explicitly sparse model even in this corner, ElasticNet remains the least-bad option and still vastly outperforms Lasso.

- κ < ~10² (well-conditioned): An important caution first: do not default to Lasso even at low κ. Lasso’s recall drops sharply at lower SNR levels regardless of multicollinearity, while ElasticNet maintains recall ≥ 0.91 across all SNR levels. ElasticNet is the safe default here. To refine further, run a quick LassoCV and inspect the elected α. If α is high or saturated at the boundary, you’re in a low-SNR regime. Ridge provides the best F1 (though not through genuine sparsification). If α is moderate, stick with ElasticNet. If α is low and domain expertise suggests sparsity, Lasso becomes viable.

If coefficient estimation is your priority: Branch on the condition number.

- κ > ~10⁴: ElasticNetCV dominates regardless of sparsity.

- κ < ~10²: Use domain knowledge. Sparse model → Lasso. Dense model → Ridge.
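The branching logic above can be encoded as a small function. This is a hypothetical helper that mirrors the guide as written here, with the post’s approximate thresholds hard-coded; the alpha_level and sparse_domain inputs stand in for the LassoCV diagnostic and your domain judgment.

```python
def recommend_regularizer(n, p, kappa, objective, alpha_level=None, sparse_domain=None):
    """Hypothetical encoding of the decision guide.

    objective: "prediction", "selection", or "coefficients".
    alpha_level: "high" (incl. saturated at the grid boundary), "moderate", or "low",
        from a quick LassoCV run; only consulted for selection at low kappa.
    sparse_domain: True/False from domain knowledge, or None if unsure.
    Thresholds (n/p = 78, kappa = 1e2 and 1e4) are approximate heuristics.
    """
    if objective not in {"prediction", "selection", "coefficients"}:
        raise ValueError(f"unknown objective: {objective!r}")
    if p > n:
        return "RidgeCV or ElasticNetCV"  # under-determined regime
    if n / p >= 78:
        return "RidgeCV"  # large-sample regime (ElasticNetCV/LassoCV fine if sparsity needed)
    if objective == "prediction":
        return "RidgeCV"
    low_kappa = kappa < 1e2
    if objective == "selection":
        if low_kappa and alpha_level == "high":
            return "RidgeCV (best F1, but keeps every feature)"
        if low_kappa and alpha_level == "low" and sparse_domain is True:
            return "LassoCV"
        return "ElasticNetCV"  # high kappa, intermediate kappa, or unsure
    # objective == "coefficients"
    if low_kappa and sparse_domain is True:
        return "LassoCV"
    if low_kappa and sparse_domain is False:
        return "RidgeCV"
    return "ElasticNetCV"  # high kappa, intermediate kappa, or unsure


print(recommend_regularizer(1000, 50, 1e5, "selection"))  # ElasticNetCV
```

Treat the output as a starting point rather than a verdict, in line with the caveats on thresholds.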

The α Diagnostic: A Free SNR Proxy

The one latent parameter that matters for fine-grained decisions, the signal-to-noise ratio, can be approximated at essentially no additional cost. When scikit-learn’s LassoCV fits your data, it stores the elected α in its alpha_ attribute. This value is inversely related to the underlying SNR: high α signals weak signal, low α signals strong signal.

Our simulations provide direct empirical confirmation: the highest elected α values (approaching 10⁴–10⁵) concentrate exclusively in small-n, low-SNR configurations.

These thresholds are approximate heuristics derived from our simulation grid; they’ll vary with feature scaling and dataset characteristics. Treat them as guidelines, not sharp cutoffs.
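Because the cutoffs shift with feature scaling, one way to read the diagnostic is to ask where the elected α sits on LassoCV’s own search grid, which scikit-learn derives from the data. A minimal sketch; the log-position summary and treating “at the top of the grid” as saturation are our assumptions, not the study’s definitions.

```python
import numpy as np
from sklearn.linear_model import LassoCV


def lasso_alpha_diagnostic(X, y, cv=5):
    """Fit LassoCV and report where the selected alpha sits on its own search grid."""
    model = LassoCV(cv=cv).fit(X, y)
    grid = model.alphas_  # the alpha grid LassoCV searched
    lo, hi = np.log(grid.min()), np.log(grid.max())
    # Position on a log scale: 0.0 = smallest alpha tried, 1.0 = largest.
    log_pos = (np.log(model.alpha_) - lo) / (hi - lo)
    saturated = np.isclose(model.alpha_, grid.max())  # stuck at the upper boundary
    return {"alpha": float(model.alpha_), "log_position": float(log_pos),
            "saturated": bool(saturated)}


# Strong-signal synthetic example: expect a low position and no saturation.
rng = np.random.default_rng(4)
X = rng.normal(size=(300, 20))
y = X[:, :5] @ np.full(5, 2.0) + rng.normal(scale=0.1, size=300)
print(lasso_alpha_diagnostic(X, y))
```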

In All Uncertain Cases

When you’re unsure about SNR, unsure about sparsity, or operating in the intermediate-κ range we didn’t directly test: ElasticNet is the default that won’t burn you, and Post-Lasso OLS should be avoided.

The Meta-Finding: Sample Size Trumps Everything

One takeaway matters more than any method-level guidance: increasing your sample-to-feature ratio does more for every objective than any regularizer choice.

Sample size is the dominant driver of performance differences across all three metrics (ω² = 0.308 for F1, a large effect). The n × SNR interaction is the strongest two-way interaction across all comparisons (F = 569, p < 0.001). Signal-to-noise matters most precisely when samples are scarce. And at n/p ≥ 78, method choice becomes irrelevant entirely.

If you’re spending days tuning your regularizer when you could be growing your training set, you’re optimizing the wrong thing.

Quick Reference

Putting It Into Practice

The simulation framework is a reusable harness. We capped sample sizes at 100k observations for compute reasons, but the grid still spans the n/p inflection point where regularizer performance shifts. We’re extending it now to newer regularizers (Adaptive Lasso, SCAD, MCP) and intermediate κ levels.

To apply this framework to your next project, compute three quantities before you fit anything: the sample-to-feature ratio (n/p), the condition number (κ), and if you’re in the small-n regime, a quick LassoCV α as your SNR proxy. Route through the decision guide above based on your primary objective.

If n/p ≥ 78, use Ridge and spend your tuning budget elsewhere. If n/p < 78 and κ is high, use ElasticNet and don’t second-guess it. The only scenario where the choice requires real thought is low κ with small n, and even there, ElasticNet is never a bad answer.

The full paper, including all appendix figures, ANOVA tables, and the consolidated decision flowchart, is available on arXiv.

Ahsaas Bajaj is a Machine Learning Tech Lead at Instacart. Benjamin S Knight is a Staff Data Scientist at Instacart.

All images were created by the authors.