I recently opened one of my old Pandas notebooks and immediately closed it.

Not because it was wrong. The code worked. The numbers checked out.

But I had no idea what was going on.

There were variables everywhere. df1, df2, final_df, final_final. Each step made sense in isolation, but as a whole it felt like I was tracing a maze. I had to read line by line just to understand what I had already done.

And the funny thing is, this is how most of us start with Pandas.

You learn a few operations. You filter here, create a column there, group and aggregate. It gets the job done. But over time, your code starts to feel harder to trust, harder to revisit, and definitely harder to share.

That was the point I realized something.

The gap between beginner and intermediate Pandas users is not about knowing more functions. It is about how you structure your transformations.

There is a pattern that quietly changes everything once you see it. Your code becomes easier to read. Easier to debug. Easier to build on.

It is called method chaining.

In this article, I will walk through how I started using method chaining properly, along with assign() and pipe(), and how it changed the way I write Pandas code. If you have ever felt like your notebooks are getting messy as they grow, this will probably click for you.

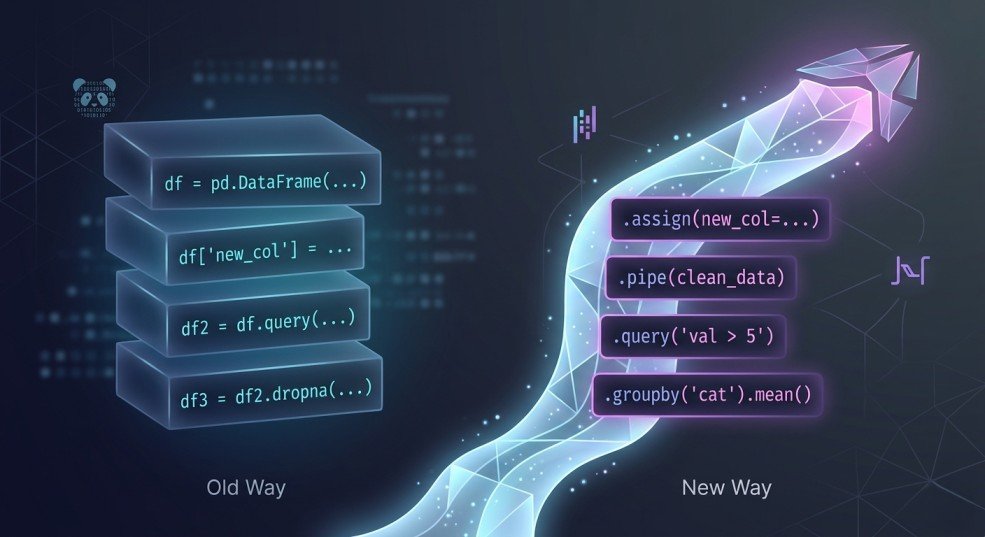

The Shift: What Intermediate Pandas Users Do Differently

At first, I thought getting better at Pandas meant learning more functions.

More tricks. More syntax. More ways to manipulate data.

But the more I built, the more I noticed something. The people who were actually good at Pandas were not necessarily using more functions than I was. Their code just looked… different.

Cleaner. More intentional. Easier to follow.

Instead of writing step-by-step code with lots of intermediate variables, they wrote transformations that flowed into each other. You could read their code from top to bottom and understand exactly what was happening to the data at each stage.

It almost felt like reading a story.

That is when it clicked for me. The real upgrade is not about what you use. It is about how you structure it.

Instead of thinking:

“What do I do next to this DataFrame?”

You start thinking:

“What transformation comes next?”

That small shift changes everything.

And this is where method chaining comes in.

Method chaining is not just a cleaner way to write Pandas. It is a different way to think about working with data. Each step takes your DataFrame, transforms it, and passes it along. No unnecessary variables. No jumping around.

Just a clear, readable flow from raw data to final result.

In the next section, I will show you exactly what this looks like using a real example.

The “Before”: How Most of Us Write Pandas

To make this concrete, let’s say we want to answer a simple question:

Which product categories are generating the most revenue each month?

I pulled a small sales dataset with order details, product categories, prices, and dates. Nothing fancy.

```python
import pandas as pd

df = pd.read_csv("sales.csv")

print(df.head())
```

Output

```
   order_id  customer_id     product     category  quantity  price  order_date
0      1001         C001      Laptop  Electronics         1   1200  2023-01-05
1      1002         C002  Headphones  Electronics         2    150  2023-01-07
2      1003         C003    Sneakers      Fashion         1     80  2023-01-10
3      1004         C001     T-Shirt      Fashion         3     25  2023-01-12
4      1005         C004     Blender         Home         1     60  2023-01-15
```

Now, here is how I would have written this not too long ago:

```python
# Create a new column for revenue
df["revenue"] = df["quantity"] * df["price"]

# Filter for orders from 2023 onwards
# (.copy() avoids pandas' SettingWithCopyWarning when a column is added below)
df_filtered = df[df["order_date"] >= "2023-01-01"].copy()

# Convert order_date to datetime and extract month
df_filtered["month"] = pd.to_datetime(df_filtered["order_date"]).dt.to_period("M")

# Group by category and month, then sum revenue
grouped = df_filtered.groupby(["category", "month"])["revenue"].sum()

# Convert Series back to DataFrame
result = grouped.reset_index()

# Sort by revenue descending
result = result.sort_values(by="revenue", ascending=False)

print(result)
```

This works. You get your answer.

```
      category    month  revenue
1  Electronics  2023-02     2050
2  Electronics  2023-03     1590
0  Electronics  2023-01     1500
8         Home  2023-03      225
6         Home  2023-01      210
5      Fashion  2023-03      205
7         Home  2023-02      180
4      Fashion  2023-02      165
3      Fashion  2023-01      155
```

But there are a few problems that start to show up as your analysis grows.

First, the flow is hard to follow. You have to keep track of df, df_filtered, grouped, and result. Each variable represents a slightly different state of the data.

Second, the logic is scattered. The transformation is happening step by step, but not in a way that feels connected. You are mentally stitching things together as you read.

Third, it is harder to reuse or test. If you want to tweak one part of the logic, you now have to trace where everything is being modified.

This is the kind of code that works fine today… but becomes painful when you come back to it a week later.

Now compare that to how the same logic looks when you start thinking in transformations instead of steps.

The “After”: When Everything Clicks

Now let’s solve the exact same problem again.

Same dataset. Same goal.

Which product categories are generating the most revenue each month?

Here’s what it looks like when you start thinking in transformations:

```python
result = (
    pd.read_csv("sales.csv")  # Start with raw data
    .assign(
        # Create revenue column
        revenue=lambda df: df["quantity"] * df["price"],
        # Convert order_date to datetime
        order_date=lambda df: pd.to_datetime(df["order_date"]),
        # Extract month from order_date
        month=lambda df: df["order_date"].dt.to_period("M"),
    )
    # Filter for orders from 2023 onwards
    .loc[lambda df: df["order_date"] >= "2023-01-01"]
    # Group by category and month, then sum revenue
    .groupby(["category", "month"], as_index=False)["revenue"]
    .sum()
    # Sort by revenue descending
    .sort_values(by="revenue", ascending=False)
)

print(result)
```

Same output. Completely different feel.

```
      category    month  revenue
1  Electronics  2023-02     2050
2  Electronics  2023-03     1590
0  Electronics  2023-01     1500
8         Home  2023-03      225
6         Home  2023-01      210
5      Fashion  2023-03      205
7         Home  2023-02      180
4      Fashion  2023-02      165
3      Fashion  2023-01      155
```

The first thing you notice is that everything flows. There is no jumping between variables or trying to remember what df_filtered or grouped meant.

Each step builds on the last one.

You start with the raw data, then:

- create revenue

- convert dates

- extract the month

- filter

- group

- aggregate

- sort

All in one continuous pipeline.

You can read it top to bottom and understand exactly what is happening to the data at each stage.

That is the part that surprised me the most.

It is not just shorter code. It is clearer code.

And once you get used to this, going back to the old way feels… uncomfortable.
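One detail quietly makes the filter step work. Inside a chain, the intermediate DataFrame has no variable name, so .loc[] receives a callable instead of a boolean mask. pandas calls that function with the current DataFrame, which means it can see columns that assign() created earlier in the chain. Here is a minimal sketch. It uses the five rows from the head() output above as stand-in data instead of sales.csv, and the 100 threshold is purely illustrative:

```python
import pandas as pd

# Stand-in for sales.csv: the five rows from the df.head() output above
sales = pd.DataFrame({
    "order_id": [1001, 1002, 1003, 1004, 1005],
    "customer_id": ["C001", "C002", "C003", "C001", "C004"],
    "product": ["Laptop", "Headphones", "Sneakers", "T-Shirt", "Blender"],
    "category": ["Electronics", "Electronics", "Fashion", "Fashion", "Home"],
    "quantity": [1, 2, 1, 3, 1],
    "price": [1200, 150, 80, 25, 60],
    "order_date": ["2023-01-05", "2023-01-07", "2023-01-10", "2023-01-12", "2023-01-15"],
})

big_orders = (
    sales
    .assign(revenue=lambda df: df["quantity"] * df["price"])
    # `sales` itself has no "revenue" column, so a plain mask such as
    # sales["revenue"] >= 100 would raise a KeyError at this point.
    # The callable receives the intermediate DataFrame, which does have it.
    .loc[lambda df: df["revenue"] >= 100]  # illustrative threshold
)

print(big_orders[["product", "revenue"]])
```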

There are a couple of things happening here that make this work so well.

We are not just chaining methods. We are using a few specific tools that make chaining actually practical.

In the next section, let’s break those down.

Breaking Down the Pattern

When I first saw this style of Pandas code, it looked a bit intimidating.

Everything was chained together. No intermediate variables. A lot happening in a small space.

But once I slowed down and broke it into pieces, it started to make sense.

There are really just three ideas carrying everything here:

- method chaining

- assign()

- pipe()

Let’s go through them one by one.

Method Chaining (The Foundation)

At its core, method chaining is simple. Each step takes a DataFrame, applies a transformation, and returns a new DataFrame. That new DataFrame is immediately passed into the next step.

So instead of this:

```python
# step1, step2 and step3 stand in for any DataFrame methods
df = df.step1()
df = df.step2()
df = df.step3()
```

You do this:

```python
df = df.step1().step2().step3()
```

That is literally it.

But the impact is bigger than it looks.

It forces you to think in terms of flow. Each line becomes one transformation. You are no longer jumping around or storing temporary states. You are just moving forward.

That is why the code starts to feel more readable. You can follow the transformation from start to finish without holding multiple versions of the data in your head.
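The step1/step2/step3 pattern above is abstract. The sketch below makes it concrete with three real DataFrame methods on small, hypothetical toy data, and checks that the two styles produce the same result:

```python
import pandas as pd

# Hypothetical toy data, only for comparing the two styles
df = pd.DataFrame({"category": ["Home", "Fashion", "Home"], "price": [60, 80, 40]})

# Step by step: every intermediate state gets its own name
filtered = df[df["price"] > 50]
ordered = filtered.sort_values("price")
stepwise = ordered.reset_index(drop=True)

# Chained: each method returns a new DataFrame that feeds the next call
chained = (
    df[df["price"] > 50]
    .sort_values("price")
    .reset_index(drop=True)
)

print(chained.equals(stepwise))  # True
```

The logic is identical. The only difference is that the chained version never names the in-between states.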

assign() — Keeping Everything in the Flow

This is the one that really unlocked chaining for me.

Before this, anytime I wanted to create a new column, I would break the flow:

```python
df["revenue"] = df["quantity"] * df["price"]
```

That works, but it interrupts the pipeline.

assign() lets you do the same thing without breaking the chain:

```python
.assign(revenue=lambda df: df["quantity"] * df["price"])
```

At first, the lambda df: part felt weird.

But the idea is simple. You are saying:

“Take the current DataFrame, and use it to define this new column.”

The key benefit is that everything stays in one place. You can see where the column is created and how it is used, all within the same flow.

It also encourages a cleaner style where transformations are grouped logically instead of scattered across the notebook.
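Two properties of assign() make it safe to use inside a chain:

- It returns a new DataFrame and leaves the original untouched.
- Its keyword arguments are evaluated in order (pandas 0.23 and later), so a later lambda can use a column created by an earlier one.

The sketch below shows both; the is_large column is a hypothetical extra, added only for illustration:

```python
import pandas as pd

orders = pd.DataFrame({"quantity": [1, 2], "price": [1200, 150]})

with_revenue = orders.assign(
    revenue=lambda df: df["quantity"] * df["price"],
    # Keyword arguments are evaluated in order, so this lambda can already
    # use the "revenue" column created on the line above
    is_large=lambda df: df["revenue"] > 500,  # hypothetical column
)

print(orders.columns.tolist())  # ['quantity', 'price']: the original is unchanged
print(with_revenue)
```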

pipe() — Where Things Start to Feel Powerful

pipe() is the one I ignored at first.

I thought, “I can already chain methods, why do I need this?”

Then I ran into a problem.

Some transformations are just too complex to fit neatly into a chain.

You either:

- write messy inline logic

- or break the chain completely

That is where pipe() comes in.

It allows you to pass your DataFrame into a custom function without breaking the flow.

For example:

```python
def filter_high_value_orders(df):
    # Keep only orders whose revenue exceeds 500
    return df[df["revenue"] > 500]

df = (
    pd.read_csv("sales.csv")
    .assign(revenue=lambda df: df["quantity"] * df["price"])
    .pipe(filter_high_value_orders)
)
```

Now your logic is cleaner, more reusable, and easier to test.
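pipe() also forwards any extra positional and keyword arguments to your function. That makes it easy to turn a hardcoded step like the one above into a reusable one. The sketch below assumes a threshold parameter that the original function does not have, and uses a tiny hypothetical DataFrame in place of sales.csv:

```python
import pandas as pd

def filter_high_value_orders(df, threshold=500):
    # Hypothetical parameterized variant: keep rows with revenue above threshold
    return df[df["revenue"] > threshold]

orders = pd.DataFrame({"quantity": [1, 2, 1], "price": [1200, 150, 80]})

high_value = (
    orders
    .assign(revenue=lambda df: df["quantity"] * df["price"])
    # Equivalent to filter_high_value_orders(<current df>, threshold=250)
    .pipe(filter_high_value_orders, threshold=250)
)

print(high_value)
```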

This is the point where things started to feel different for me.

Instead of writing long scripts, I was starting to build small, reusable transformation steps.

And that is when it clicked.

This is not just about writing cleaner Pandas code. It is about writing code that scales as your analysis gets more complex.

In the next section, I want to show how this changes the way you think about working with data entirely.

Thinking in Pipelines (The Real Upgrade)

Up until this point, it might feel like we just made the code look nicer.

But something deeper is happening here.

When you start using method chaining consistently, the way you think about working with data begins to change.

Before, my approach was very step-by-step.

I would look at a DataFrame and think:

“What do I do next?”

- Filter it.

- Modify it.

- Store it.

- Move on.

Each step felt a bit disconnected from the last.

But with method chaining, that question changes.

Now it becomes:

“What transformation comes next?”

That shift is small, but it changes how you structure everything.

You stop thinking in terms of isolated steps and start thinking in terms of a flow. A pipeline. Data comes in, gets transformed stage by stage, and produces an output.

And the code reflects that.

Each line is not just doing something. It is part of a sequence. A clear progression from raw data to insight.

This also makes your code easier to reason about.

If something breaks, you do not have to scan the entire notebook. You can look at the pipeline and ask:

- which transformation might be wrong?

- where did the data change in an unexpected way?

It becomes easier to debug because the logic is linear and visible.
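Because the pipeline is linear, you can also inspect it without taking it apart. One common trick is a pass-through function that reports on the data and returns it unchanged. The sketch below uses a hypothetical log_shape helper (it is not part of pandas) and small made-up data:

```python
import pandas as pd

def log_shape(df, label):
    # Hypothetical debugging helper: report the current shape, then pass df through unchanged
    print(f"{label}: {df.shape[0]} rows x {df.shape[1]} columns")
    return df

orders = pd.DataFrame({
    "category": ["Electronics", "Electronics", "Fashion", "Home"],
    "quantity": [1, 2, 1, 1],
    "price": [1200, 150, 80, 60],
})

result = (
    orders
    .pipe(log_shape, "raw")  # raw: 4 rows x 3 columns
    .assign(revenue=lambda df: df["quantity"] * df["price"])
    .loc[lambda df: df["revenue"] > 100]
    .pipe(log_shape, "after filter")  # after filter: 2 rows x 4 columns
    .groupby("category", as_index=False)["revenue"]
    .sum()
)
```

If a stage drops more rows than expected, the printed shapes point straight at it.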

Another thing I noticed is that it naturally pushes you toward better habits.

- You start writing smaller transformations.

- You start naming things more clearly.

- You start thinking about reuse without even trying.

And that is where it starts to feel less like “just Pandas” and more like building actual data workflows.

At this point, you are not just analyzing data.

You are designing how data flows.

Real-World Refactor: From Messy to Clean

Let me show you how this actually plays out.

Instead of jumping straight from messy code to a perfect chain, I want to walk through how I would refactor this step by step. This is usually how it happens in real life anyway.

Step 1: The Starting Point (Messy but Works)

```python
df = pd.read_csv("sales.csv")  # Load dataset

# Create revenue column
df["revenue"] = df["quantity"] * df["price"]

# Filter orders from 2023 onwards
# (.copy() avoids pandas' SettingWithCopyWarning when a column is added below)
df_filtered = df[df["order_date"] >= "2023-01-01"].copy()

# Convert order_date and extract month
df_filtered["month"] = pd.to_datetime(df_filtered["order_date"]).dt.to_period("M")

# Group by category and month, then sum revenue
grouped = df_filtered.groupby(["category", "month"])["revenue"].sum()

# Convert to DataFrame
result = grouped.reset_index()

# Sort results
result = result.sort_values(by="revenue", ascending=False)
```

Nothing wrong here. This is how most of us start.

But we can already see:

- too many intermediate variables

- transformations are scattered

- harder to follow as it grows

Step 2: Reduce Unnecessary Variables

First, remove variables that are not really needed.

```python
df = pd.read_csv("sales.csv")  # Load dataset

# Create new columns upfront
df["revenue"] = df["quantity"] * df["price"]
df["month"] = pd.to_datetime(df["order_date"]).dt.to_period("M")

result = (
    # Filter relevant rows
    df[df["order_date"] >= "2023-01-01"]
    # Aggregate revenue by category and month
    .groupby(["category", "month"])["revenue"]
    .sum()
    # Convert to DataFrame
    .reset_index()
    # Sort results
    .sort_values(by="revenue", ascending=False)
)
```

Already better. There are fewer moving parts, and some flow is starting to appear.

Step 3: Introduce Basic Chaining

Now we start chaining more deliberately.

```python
result = (
    pd.read_csv("sales.csv")  # Start with raw data
    .assign(
        # Create revenue column
        revenue=lambda df: df["quantity"] * df["price"],
        # Extract month from order_date
        month=lambda df: pd.to_datetime(df["order_date"]).dt.to_period("M"),
    )
    # Filter for recent orders
    .loc[lambda df: df["order_date"] >= "2023-01-01"]
    # Group and aggregate
    .groupby(["category", "month"])["revenue"]
    .sum()
    # Convert to DataFrame
    .reset_index()
    # Sort results
    .sort_values(by="revenue", ascending=False)
)
```

At this point, the flow is clear, transformations are grouped logically, and we are no longer jumping between variables.

Step 4: Clean It Up Further

Small tweaks make a big difference.

```python
result = (
    pd.read_csv("sales.csv")  # Load data
    .assign(
        # Create revenue
        revenue=lambda df: df["quantity"] * df["price"],
        # Ensure order_date is datetime
        order_date=lambda df: pd.to_datetime(df["order_date"]),
        # Extract month from order_date
        month=lambda df: df["order_date"].dt.to_period("M"),
    )
    # Filter relevant time range
    .loc[lambda df: df["order_date"] >= "2023-01-01"]
    # Aggregate revenue
    .groupby(["category", "month"], as_index=False)["revenue"]
    .sum()
    # Sort results
    .sort_values(by="revenue", ascending=False)
)
```

Now order_date is converted to datetime once and reused by both the month column and the date filter. as_index=False also replaces the separate reset_index() call, which gives the chain a more consistent structure.
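Refactoring step by step is safer when you can prove each version still gives the same answer. The sketch below wraps the Step 1 and Step 4 logic in two hypothetical functions, step1_version and step4_version. It runs both on the five sample rows from the head() output (standing in for sales.csv) and compares the results with pandas' own testing helper:

```python
import pandas as pd

# Stand-in for sales.csv: the five rows from the df.head() output
sales = pd.DataFrame({
    "category": ["Electronics", "Electronics", "Fashion", "Fashion", "Home"],
    "quantity": [1, 2, 1, 3, 1],
    "price": [1200, 150, 80, 25, 60],
    "order_date": ["2023-01-05", "2023-01-07", "2023-01-10", "2023-01-12", "2023-01-15"],
})

def step1_version(raw):
    # Hypothetical wrapper around the step-by-step logic from Step 1
    df = raw.copy()
    df["revenue"] = df["quantity"] * df["price"]
    df_filtered = df[df["order_date"] >= "2023-01-01"].copy()
    df_filtered["month"] = pd.to_datetime(df_filtered["order_date"]).dt.to_period("M")
    grouped = df_filtered.groupby(["category", "month"])["revenue"].sum()
    return grouped.reset_index().sort_values(by="revenue", ascending=False)

def step4_version(raw):
    # Hypothetical wrapper around the chained logic from Step 4
    return (
        raw
        .assign(
            revenue=lambda df: df["quantity"] * df["price"],
            order_date=lambda df: pd.to_datetime(df["order_date"]),
            month=lambda df: df["order_date"].dt.to_period("M"),
        )
        .loc[lambda df: df["order_date"] >= "2023-01-01"]
        .groupby(["category", "month"], as_index=False)["revenue"]
        .sum()
        .sort_values(by="revenue", ascending=False)
    )

# Raises an AssertionError describing the difference if the refactor changed anything
pd.testing.assert_frame_equal(step1_version(sales), step4_version(sales))
print("Refactor preserved the output")
```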

Step 5: When pipe() Becomes Useful

Let’s say the logic grows. Maybe we only care about high-revenue rows.

Instead of stuffing that logic into the chain, we extract it:

```python
def filter_high_revenue(df):
    # Keep only rows where revenue is above threshold
    return df[df["revenue"] > 500]
```

Now we plug it into the pipeline:

```python
result = (
    pd.read_csv("sales.csv")  # Load data
    .assign(
        # Create revenue
        revenue=lambda df: df["quantity"] * df["price"],
        # Convert and extract time features
        order_date=lambda df: pd.to_datetime(df["order_date"]),
        month=lambda df: df["order_date"].dt.to_period("M"),
    )
    # Apply custom transformation
    .pipe(filter_high_revenue)
    # Filter by date
    .loc[lambda df: df["order_date"] >= "2023-01-01"]
    # Aggregate results
    .groupby(["category", "month"], as_index=False)["revenue"]
    .sum()
    # Sort output
    .sort_values(by="revenue", ascending=False)
)
```

This is where it starts to feel different. Your code is no longer just a script. Now, it’s a sequence of reusable transformations.
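Because filter_high_revenue is an ordinary function, you can test it on a tiny hand-built DataFrame without loading sales.csv. A minimal sketch in plain assert style follows. The test function and its data are hypothetical; pytest would collect a function like this automatically because of its test_ prefix:

```python
import pandas as pd

def filter_high_revenue(df):
    # Keep only rows where revenue is above threshold (500)
    return df[df["revenue"] > 500]

def test_filter_high_revenue():
    # Hypothetical test data: one row above the threshold, one below
    df = pd.DataFrame({"product": ["Laptop", "Blender"], "revenue": [1200, 60]})
    out = filter_high_revenue(df)
    # Only the row above 500 survives, and the input is left untouched
    assert out["product"].tolist() == ["Laptop"]
    assert len(df) == 2

test_filter_high_revenue()
print("ok")
```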

What I like about this process is that you do not need to jump straight to the final version.

You can evolve your code gradually.

- Start messy.

- Reduce variables.

- Introduce chaining.

- Extract logic when needed.

That is how this pattern actually sticks.

Next, let’s talk about a few mistakes I made while learning this so you do not run into the same issues.

Common Mistakes (I Made Most of These)

When I started using method chaining, I definitely overdid it.

Everything felt cleaner, so I tried to force everything into a chain. That led to some… questionable code.

Here are a few mistakes I ran into so you do not have to.

1. Over-Chaining Everything

At some point, I thought longer chains = better code.

Not true.

```python
# This gets hard to read very quickly
df = (
    df
    .assign(...)
    .loc[...]
    .groupby(...)
    .agg(...)
    .reset_index()
    .rename(...)
    .sort_values(...)
    .query(...)
)
```

Yes, it is technically clean. But now it is doing too much in one place.

Fix:

- Break your chain when it starts to feel dense.

- Group related transformations together.

- Split logically different steps.

- Think readability first, not cleverness.
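One way to apply these fixes is to give each logically different stage its own small function, so that the top-level pipeline stays short. The sketch below does this; the stage names add_features and monthly_revenue and the three-row sample are hypothetical:

```python
import pandas as pd

def add_features(df):
    # Stage 1 (hypothetical name): derive the columns the analysis needs
    return df.assign(
        revenue=lambda df: df["quantity"] * df["price"],
        month=lambda df: pd.to_datetime(df["order_date"]).dt.to_period("M"),
    )

def monthly_revenue(df):
    # Stage 2 (hypothetical name): aggregate and order the result
    return (
        df.groupby(["category", "month"], as_index=False)["revenue"]
        .sum()
        .sort_values("revenue", ascending=False)
    )

sales = pd.DataFrame({
    "category": ["Electronics", "Fashion", "Home"],
    "quantity": [1, 3, 1],
    "price": [1200, 25, 60],
    "order_date": ["2023-01-05", "2023-01-12", "2023-01-15"],
})

# The top-level pipeline now reads as two named stages
result = sales.pipe(add_features).pipe(monthly_revenue)
print(result)
```

Each stage is still a chain, just a shorter one with a single job.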

2. Forcing Logic Into One Line

I used to cram complex logic into assign() or loc[] just to keep the chain going.

That usually makes things worse.

```python
import numpy as np

# Everything crammed into one lambda inside assign()
df = df.assign(
    revenue_flag=lambda df: np.where(
        (df["quantity"] * df["price"] > 500) & (df["category"] == "Electronics"),
        "High",
        "Low",
    )
)
```

This works, but it is not very readable.

Fix:

If the logic is complex, extract it.

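```python
import numpy as np

def add_revenue_flag(df):
    # Work on a copy so the caller's DataFrame is not modified in place
    df = df.copy()
    # "High" for Electronics orders with revenue above 500, otherwise "Low"
    df["revenue_flag"] = np.where(
        (df["quantity"] * df["price"] > 500) & (df["category"] == "Electronics"),
        "High",
        "Low",
    )
    return df

df = df.pipe(add_revenue_flag)
```

Cleaner. Easier to test. Easier to reuse.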

3. Ignoring pipe() for Too Long

I avoided pipe() at first because it felt unnecessary. But without it, you hit a ceiling.

You either:

- break your chain

- or write messy inline logic

Fix:

- Use pipe() as soon as your logic stops being simple.

- It is what turns your code from a script into something modular.
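pipe() can also handle functions that do not take the DataFrame as their first argument. Pass a (function, "keyword") tuple, and pandas supplies the DataFrame under that keyword. The top_n helper below is hypothetical and stands in for any such function:

```python
import pandas as pd

def top_n(n, data):
    # Hypothetical helper whose DataFrame argument is not the first parameter
    return data.nlargest(n, "revenue")

orders = pd.DataFrame({
    "product": ["Laptop", "Headphones", "Blender"],
    "revenue": [1200, 300, 60],
})

# The tuple tells pipe() to pass the DataFrame as data=..., i.e. top_n(2, data=orders)
top = orders.pipe((top_n, "data"), 2)
print(top)
```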

4. Losing Readability With Poor Naming

When you start using custom functions with pipe(), naming matters a lot.

Bad:

```python
def transform(df): ...
```

Better:

```python
def filter_high_revenue(df): ...
```

Now your pipeline reads like a story:

```python
.pipe(filter_high_revenue)
```

That small change makes a big difference.

5. Thinking This Is About Shorter Code

This one took me a while to realize. Method chaining is not about writing fewer lines. It is about writing code that is easier to read, reason about, and come back to later.

Sometimes the chained version is longer. That is fine. If it is clearer, it is better.

Let’s wrap this up and tie it back to the “intermediate” idea.

Conclusion: Leveling Up Your Pandas Game

If you’ve followed along, you’ve seen a small shift with a big impact.

By thinking in transformations instead of steps, using method chaining, assign(), and pipe(), your code stops being just a collection of lines and becomes a clear, readable flow.

Here’s what changes when you internalize this pattern:

- You can read your code top to bottom without getting lost.

- You can reuse transformations easily, making your notebooks more modular.

- You can debug and test without tracing dozens of intermediate variables.

- You start thinking in pipelines, not just steps.

This is exactly what separates a beginner from an intermediate Pandas user.

You’re no longer just “making it work.” You’re designing your analysis in a way that scales, is maintainable, and looks good to anyone who reads it—even future you.

Try It Yourself

Pick a messy notebook you’ve been working on and refactor just one part using method chaining.

- Start with assign() for new columns.

- Use loc[] to filter.

- Introduce pipe() for any custom logic.

You’ll be surprised how much clearer your notebook becomes, almost immediately.

That’s it. You’ve just unlocked intermediate Pandas.

Your next step? Keep practicing, build your own pipelines, and notice how your thinking about data transforms along with your code.