people! If you have ever wanted to understand how linear regression works or just refresh the main ideas without jumping between lots of different sources – this article is for you. It is an extra long read that took me more than a year to write. It’s built around five key ideas:

- Visuals first. This is a comic-style article: reading the text helps, but it’s not required. A quick run through the images and animations can still give you a solid understanding of how things work. There are 100+ visuals in total;

- Animations where they might help (33 total). Computer science is best understood in motion, so I use animations to explain key ideas;

- Beginner-friendly. I kept the material as simple as possible, to make the article easy for beginners to follow.

- Reproducible. Most visuals were generated in Python, and the code is open source.

- Focus on practice. Each next step solves a problem that shows up in the previous step, so the whole article stays connected.

One more thing: the post is simplified on purpose, so some wording and examples may be a bit rough or not perfectly precise. Please don’t just take my word for it – think critically and double-check my points. For the most important parts, I provide links to the source code so you can verify everything yourself.

Table of contents

Who is this article for

Skip this paragraph, just scroll through the article for two minutes and look at the visuals. You’ll immediately know if you want to read it properly (the main ideas are shown in the plots and animations). This post is for beginners and for anyone working with data – and also for experienced people who want a quick refresh.

What this post covers

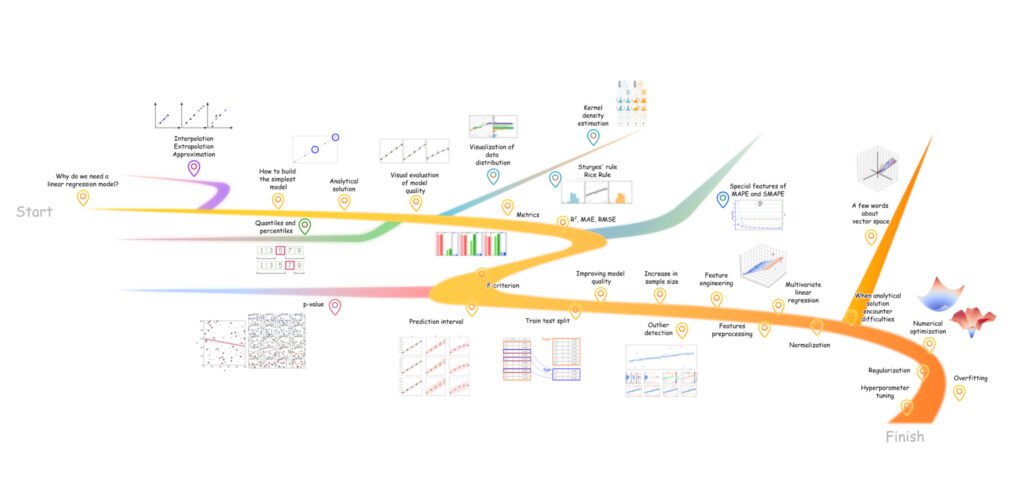

The article is structured in three acts:

- Linear regression: what it is, why we use it, and how to fit a model;

- How to evaluate the model’s performance;

- How to improve the model when the results are not good enough.

At a high level, this article covers:

- data-driven modeling;

- analytical solution for linear regression, and why it is not always practical;

- ways to evaluate model quality, both visually and with metrics;

- Multiple linear regression, where predictions are based on many features;

- the probabilistic side of linear regression, since predictions are not exact and it is important to quantify uncertainty;

- ways to improve model quality, from adding complexity to simplifying the model with regularization.

More specifically, it walks through:

- the least squares method for simple linear regression;

- regression metrics such as R², RMSE, MAE, MAPE, SMAPE, along with the Pearson correlation coefficient and the coefficient of determination, plus visual diagnostics like residual plots;

- maximum likelihood and prediction intervals;

- train/test splits, why they matter and how to do them;

- outlier handling methods, including RANSAC, Mahalanobis distance, Local Outlier Factor (LOF), and Cook’s distance;

- data preprocessing, including normalization, standardization, and categorical encoding;

- the linear algebra behind least squares, and how it extends to multivariate regression;

- numerical optimization methods, including gradient descent;

- L1 and L2 regularization for linear models;

- cross-validation and hyperparameter optimization.

Although this article focuses on linear regression, some parts – especially the section on model evaluation, apply to other regression algorithms as well. The same goes for the feature preprocessing chapters.

Since this is meant as an introductory, ML-related guide to linear regression, I’ll mostly avoid vector notation (where formulas use vectors instead of scalars). In other words, you will hardly see vectors and matrices in the equations, except in a few places where they are truly necessary. Keep in mind that most of the formulas shown here do have a vector form, and modern libraries implement the algorithms in exactly that way. Those implementations are efficient and reliable, so if you decide to code things up, don’t reinvent the wheel – use well-tested libraries or tools with UI when it makes sense.

All animations and images in the article are original and created by the author.

A brief literary review

This topic isn’t new, so there’s plenty of material out there. Below is a short list of direct predecessors, similar in platform (mostly Towards Data Science) and audience, meaning browser-first readers rather than textbook readers. The list is ordered by increasing subjective complexity:

- What is Linear Regression? – A beginner-friendly overview of what linear regression is, what the line represents, how predictions are made, with simple visuals and code;

- A Practical Guide to Linear Regression – Represents linear model fitting as machine learning pipeline: EDA, feature handling, model fitting, and evaluation on a real Kaggle dataset;

- Mastering the Basics: How Linear Regression Unlocks the Secrets of Complex Models – Easy to follow guide with step-by-step calculations memorable and nice visuals;

- Predict Housing Price using Linear Regression in Python – implementation-oriented article built around the Boston Housing dataset, with code examples for calculations from scratch;

- Multiple Linear Regression Analysis – An article with more mathematical detail, focused on multicollinearity;

- Mastering Linear Regression: The Definitive Guide For Aspiring Data Scientists – A long, all in one guide, theory plus Python;

- Linear Regression In Depth (Part 1) and Linear Regression In Depth (Part 2) – Deeper theory plus implementation articles that focuses on simple linear regression and sets up the transition to multiple regression;

And of course, do not ignore the classic papers if you want to read more about this topic. I’m not listing them as a separate bibliography in this section, but you’ll find links to them later in the text. Each reference appears right after the fragment it relates to, in square brackets, in the format: [Author(s). Title. Year. Link to the original source]

A good model starts with data

Let’s assume we have tabular data with two columns:

- Number of rooms in the apartment;

- The price of the apartment, $

By the time you build a model, there should already be data. Data collection and the initial preparation of the dataset are outside the scope of this article, especially since the process can vary a lot depending on the domain. The main principle to keep in mind is “garbage in, garbage out,” which applies to supervised machine learning in general. A good model starts with a good dataset.

Disclaimer regarding the dataset: The data used in this article is synthetic and was generated by the author. It is distributed under the same license as the source code – BSD 3-Clause.

Why do we need a model?

As the British statistician George Box once said, “All models are wrong, but some are useful.” Models are useful because they help us uncover patterns in data. Once those patterns are expressed as a mathematical relationship (a model), we can use it, for example, to generate predictions (Figure 2).

Modeling relationships in data is not a trivial task. It can be done using mathematical models of many different kinds – from simple ones to modern multi-stage approaches such as neural networks. For now, the key point is that a “model” can mean any kind of mapping from one set of data (feature columns) to a target column. I’ll use this definition throughout the article.

In linear regression, we model linear relationships between data variables. In pair (one-feature) regression – when there is one feature and one dependent variable – the equation has the form:

, where – feature, – target variable [James, G., et al. Linear Regression. An Introduction to Statistical Learning, 2021. Free version https://www.statlearning.com/].

So the expression is a linear regression model. And is one as well – the only difference is the coefficients. Since the coefficients are the key parameters of the equation, they have their own names:

- b0 – the intercept (also called the bias term)

- b1 – the slope coefficient

So, when we build a linear regression model, we make the following assumption:

Assumption 1. The relationship between the features (independent variables) and the response (dependent variable) is linear [Kim, Hae-Young. Statistical notes for clinical researchers: simple linear regression 1 – basic concepts, 2018. https://www.rde.ac/upload/pdf/rde-43-e21.pdf]

An example of a linear model with the intercept and slope coefficients already fitted (we will discuss why they are called that a bit later) is shown in Figure 4.

For the dataset shown in Figure 1, estimating the apartment price in dollars means multiplying the number of rooms by 10 000.

Important note: we are focusing on an approximation – so the model line does not have to pass through every data point, because real-world data almost never falls exactly on a single straight line. There is always some noise, and some factors the model does not see. It’s enough for the model line to stay as close to the observed data as possible. If you do not remember well the difference between approximation, interpolation and extrapolation, please check the image below.

Side branch 1. Difference between approximation, interpolation and extrapolation

How to build a simple model

We need to choose the coefficients and in the equation below so that the straight line fits the empirical observations (the real data) as closely as possible: , where – number of rooms, – apartment price, $.

Why this equation, and why two coefficients

Despite its apparent simplicity, the linear regression equation can represent many different linear relationships, as shown in Figure 5. For each dataset, a different line will be optimal.

Analytical solution

To find the optimal coefficient values, we will use an analytical solution: plug the empirical data from the previous section into a well-known formula derived long ago (by Carl Gauss and Adrien-Marie Legendre). The analytical solution can be written as four simple steps (Figure 6) [Hastie, T., et al. Linear Methods for Regression (Chapter 3 in The Elements of Statistical Learning: Data Mining, Inference, and Prediction). 2009. https://hastie.su.domains/ElemStatLearn].

Error is also part of the model

Earlier, I noted that linear regression is an approximation algorithm. This means we do not require the line to pass exactly through the observations. In other words, even at this stage we allow the model’s predictions to differ from the observed apartment prices. And it is important to emphasize: this kind of mismatch is completely normal. In the real world, it is very hard to find a process that generates data lying perfectly on a straight line (Figure 7).

So, the model needs one more component to be realistic: an error term. With real data, error analysis is essential – it helps spot problems and fix them early. Most importantly, it provides a way to quantify how good the model really is.

How to measure model quality

Model quality can be assessed using two main approaches:

- Visual evaluation

- Metric-based evaluation

Before we dive into each one, it is a good moment to define what we mean by “quality” here. In this article, we will consider a model a good one when the error term is as small as possible.

Using the original dataset (see Figure 1), different coefficient values can be plugged into the linear regression equation. Predictions are then generated for the known examples, and the difference between predicted and actual values is compared (Table 1). Among all combinations of the intercept and slope, one pair yields the smallest error.

| Number of rooms | Model (b0 + b1 x rooms number) | Prediction | Ground truth (observation) | Error (observation – predicted) |

| 2 | 20 000 | 20 000 | 0 | |

| 2 | 10 000 | 20 000 | 10 000 | |

| 2 | 2 500 | 20 000 | 17 500 |

The table example above is easy to follow because it is a small, toy setup. It only shows how different models predict the price of a two-room apartment, and in the original dataset each “number of rooms” value maps to a single price. Once the dataset gets larger, this kind of manual comparison becomes impractical. That’s why model quality is usually assessed with evaluation tools (visuals, metrics and statistical tests) rather than hand-made tables.

To make things a bit more realistic, the dataset will be expanded in three versions: one easy case and two that are harder to fit. The same evaluation will then be applied to these datasets.

Figure 8 is closer to real life: apartments vary, and even if the number of rooms are the same, the price across different properties doesn’t have to be identical.

Visual evaluation

Using the formula from the Analytic Solution section (Figure 6), the data can be plugged in to obtain the following models for each dataset:

- A: , where x is rooms number

- B: , where x is rooms number

- C: , where x is rooms number

A useful first plot to show here is the scatter plot: the feature values are placed on the x-axis, while the y-axis shows both the predicted values and the actual observations, in different colors. This kind of figure is straightforward to interpret – the closer the model line is to the real data, the better the model. It also makes the relationship between the variables easier to see, since the feature itself is shown on the plot [Piñeiro, G., et al. How to evaluate models: Observed vs. predicted or predicted vs. observed? 2008. https://doi.org/10.1016/j.ecolmodel.2008.05.006].

One downside of this plot is that it becomes hard to introduce additional features once you have more than one or two – for example, when price depends not only on the number of rooms, but also on the distance to the nearest metro station, the floor level, and so on. Another issue is scale: the target range can strongly shape the visual impression. Tiny differences on the chart, barely visible to the eye, may still correspond to errors of several thousand dollars. Price prediction is a great example here, because a misleading visual impression of model errors can translate directly into money.

When the number of features grows, visualizing the model directly (feature vs. target with a fitted line) quickly becomes messy. A cleaner alternative is an observed vs. predicted scatter plot. It’s built like this: the x-axis shows the actual values, and the y-axis shows the predicted values (Figure 10) [Moriasi, D. N., et al. Hydrologic and Water Quality Models: Performance Measures and Evaluation Criteria. 2015. pdf link]. I’ve also seen the axes swapped, with predicted values on the x-axis instead. Either way, the plot serves the same purpose – so feel free to choose whichever convention you prefer.

This plot is read as follows: the closer the points are to the diagonal line coming from the bottom-left corner, the better. If the model reproduced the observations perfectly, every point would sit exactly on that line without any deviation (dataset A looks pretty close to this ideal case).

When datasets are large, or the structure is uneven (for example, when there are outliers), Q-Q plots can be helpful. They show the same predicted and observed values on the same axes, but after a special transformation.

Q-Q plot Option 1, – order statistics. Predicted values are sorted in ascending order, and the same is done for the observed values. The two sorted arrays are then plotted against each other, just like in Figure 10.

Q-Q plot Option 2, – two-sample Q-Q plot. Here the plot uses quantiles rather than raw sorted values. The data are grouped into a finite number of levels (I usually use around 100). This plot is useful when the goal is to compare the overall pattern, not individual “prediction vs. observation” pairs. It helps to see the shape of the distributions, where the median sits, and how common very large or very small values are.

Side branch 2. Reminder about quantiles

According to Wikipedia, a quantile is a value that a given random variable does not exceed with a fixed probability.

Setting the probability wording aside for a moment, a quantile can be thought of as a value that splits a dataset into parts. For example, the 0.25 quantile is the number below which 25% of the sample lies. And the 0.9 quantile is the value below which 90% of the data lies.

For the sample [ 1 , 3 , 5 , 7 , 9 ] the 0.5 quantile (the median) is 5. There are only two values above 5 (7 and 9), and only two below it (1 and 3).

The 0.25 quantile is approximately 3, and the 0.75 quantile is approximately 7. See the explanation in the figure below.

The 25th percentile is also called the first quartile, the 50th percentile is the median or second quartile, and the 75th percentile is the third quartile.

In the second variant, no matter how large the dataset is, this plot always shows 99 points, so it scales well to large samples. In Figure 11, the real and predicted quantiles for dataset A lie close to the diagonal line which indicates a good model. For dataset B, the right tail of the distributions (upper-right corner) starts to diverge, meaning the model performs worse on high-priced apartments.

For dataset C:

- Below the 25th percentile, the predicted quantiles lie above the observed ones;

- Within the interquartile range (from the 25th to the 75th percentile), the predicted quantiles lie below the observed ones;

- Above the 75th percentile, the predicted tail again lies above the observed one.

Another widely used diagnostic is the residual plot. The x-axis shows the predicted values, and the y-axis shows the residuals. Residuals are the difference between the observed and predicted values. If you prefer, you can define the error with the opposite sign (predicted minus observed) and plot that instead. It doesn’t change the idea – only the direction of the values on the y-axis.

A residual plot is one of the most convenient tools for checking the key assumptions behind linear regression (Assumption 1 (linearity) was introduced earlier):

- Assumption 2. Normality of residuals. The residuals (observed minus predicted) should be approximately normally distributed. Intuitively, most residuals should be small and close to zero, while large residuals are rare. Residuals occur roughly equally often in the positive and negative direction.

- Assumption 3. Homoscedasticity (constant variance). The model should have errors of roughly the same magnitude across the full range: cheap apartments, mid-range ones, and expensive ones.

- Assumption 4. Independence. Observations (and their residuals) should be independent of each other – i.e., there should be no autocorrelation.

Figure 12 shows that dataset B violates Assumption 3: as the number of rooms increases, the errors get larger – the residuals fan out from left to right, indicating increasing variance. In other words, the error is not constant and depends on the feature value. This usually means the model is missing some underlying pattern, which makes its predictions less reliable in that region.

For dataset C, the residuals don’t look normal: the model sometimes systematically overestimates and sometimes systematically underestimates, so the residuals drift above and below zero in a structured way rather than hovering around it randomly. On top of that, the residual plot shows visible patterns, which can be a sign that the errors are not independent (to be fair, not always XD but either way it’s a signal that something is off with the model).

A nice companion to Figure 12 is a set of residual distribution plots (Figure 13). These make the shape of the residuals immediately visible: even without formal statistical tests, you can eyeball how symmetric the distribution is (a good sign is symmetry around zero) and how heavy its tails are. Ideally, the distribution should look bell-shaped, most residuals should be small, while large errors should be rare.

Side branch 3. A quick reminder about frequency distributions

If your stats course has faded from memory or you never took one this part is worth a closer look. This section introduces the most common ways to visualize samples in mathematical statistics. After it, interpreting the plots used later in the article should be straightforward.

Frequency distribution is an ordered representation showing how many times the values of a random variable fall within certain intervals.

To build one:

- Split the full range of values into k bins (class intervals)

- Count how many observations fall into each bin – this is the absolute frequency

- Divide the absolute frequency by the sample size n to get the relative frequency

In the figure below, the same steps are shown for the variable V:

The same kind of visualization can be built for variable U as well, but in this section the focus stays on V for simplicity. Later on, the histogram will be rotated sideways to make it easier to compare the raw data with the vertical layout commonly used for distribution plots.

From the algorithm description and from the figure above, one important drawback becomes clear: the number of bins k (and therefore the bin width) has a major impact on how the distribution looks.

There are empirical formulas that help choose a reasonable number of bins based on the sample size. Two common examples are Sturges’ rule and the Rice rule (see Extra Figure 5 below) [Sturges. The Choice of a Class Interval. 1926. DOI: 10.1080/01621459.1926.10502161], [Lane, David M., et. al. Histograms. https://onlinestatbook.com/2/graphing_distributions/histograms.html].

An alternative is to visualize the distribution using kernel density estimation (KDE). KDE is a smoothed version of a histogram: instead of rectangular bars, it uses a continuous curve built by summing many smooth “kernel” functions, usually, normal distributions (Extra Figure 6).

I understand that describing KDE as a sum of “tiny normal distributions” isn’t very intuitive. Here’s a better mental picture. Imagine that each data point is filled with a large number of tiny grains of sand. If you let the sand fall under gravity, it forms a little pile directly beneath that point. When several points are close to each other, their sand piles overlap and build a larger mound. Watch the animation below to see how it works:

In a KDE plot, those “sand piles” are typically modeled as small normal (Gaussian) distributions placed around each data point.

Another widely used way to summarize a distribution is a box plot. A box plot describes the distribution in terms of quartiles. It shows:

- The median (second quartile, Q2);

- The first (Q1) and third (Q3) quartiles (the 25th and 75th percentiles), which form the edges of the “box”;

- The whiskers, which mark the range of the data excluding outliers;

- Individual points, which represent outliers.

To sum up, the next step is to visualize samples of different sizes and shapes using all the methods discussed above. This will be done by drawing samples from different theoretical distributions: two sample sizes for each, 30 and 500 observations.

A frequency distribution is a key tool for describing and understanding the behavior of a random variable based on a sample. Visual methods like histograms, kernel density curves, and box plots complement each other and help build a clear picture of the distribution: its symmetry, where the mass is concentrated, how spread out it is, and whether it contains outliers.

Such point of view on the data is also useful because it has a natural probabilistic interpretation: the most likely values fall in the region where the probability density is highest, i.e., where the KDE curve reaches its peak.

As noted above, the residual distribution should look roughly normal. That’s why it makes sense to compare two distributions: theoretical normal vs. the residuals we actually observe. Two convenient tools for this are density plots and Q-Q plots with residual quantiles vs. normal quantiles. The parameters of the normal distribution are estimated from the residual sample. Since these plots work best with larger samples, for illustration I’ll artificially increase each residual set to 500 values while preserving the key behavior of the residuals for each dataset (Figure 14).

As Figure 14 shows, the residual distributions for datasets A* and B* are reasonably well approximated by a normal distribution. For B*, the tails drift a bit: large errors occur slightly more often than we would like. The bimodal case C* is much more striking: its residual distribution looks nothing like normal.

Heteroscedasticity in B* won’t show up in these plots, because they look at residuals on their own (one dimension) and ignore how the error changes across the range of predictions.

To sum up, a model is rarely perfect, it has errors. Error analysis with plots is a convenient way to diagnose the model:

- For pair regression, it is useful to plot predicted and observed values on the y-axis against the feature on the x-axis. This makes the relationship between the feature and the response easy to see;

- As an addition, plot observed values (x-axis) vs. predicted values (y-axis). The closer the points are to the diagonal line coming from the bottom-left corner, the better. This plot is also handy because it does not depend on how many features the model has;

- If the goal is to compare the full distributions of predictions and observations, rather than individual pairs, a Q-Q plot is a good choice;

- For very large samples, cognitive load can be reduced by grouping values into quantiles on the Q-Q plot, so the plot will have, for example, only 100 scatter points;

- A residual plot helps check whether the key linear regression assumptions hold for the current model (independence, normality of residuals, and homoscedasticity);

- For an easier comparison between the residual distribution and a theoretical normal distribution, use a Q-Q plot.

Metrics

Disclaimer regarding the designations X and Y

In the visualizations in this section, some notation may look a bit unusual compared to related literature. For example, predicted values are labeled , while the observed response is labeled . This is intentional: even though the discussion is tied to model evaluation, I don’t want it to feel like the same ideas only apply to the “prediction vs. observation” pair. In practice, and can be any two arrays – the right choice depends on the task.

There’s also a practical reason for choosing this pair: and are visually distinct. In plots and animations, they are easier to tell apart than pairs like and , or the more familiar and .

As compelling as visual diagnostics can be, model quality is best assessed together with metrics (numerical measures of performance). A good metric is appealing because it reduces cognitive load: instead of inspecting yet another set of plots, the evaluation collapses to a single number (Figure 15).

Unlike a residual plot, a metric is also a very convenient format for automated analysis, not just easy to interpret, but easy to plug into code. That makes metrics useful for numerical optimization, which we will get to a bit later.

This “Metrics” section also includes statistical tests: they help assess the significance of individual coefficients and of the model as a whole (we will cover that later as well).

Here is a non-exhaustive list:

- Coefficient of determination R2 – [Kvalseth, Tarald O. Cautionary Note about R². 1985. https://www.tandfonline.com/doi/abs/10.1080/00031305.1985.10479448];

- Bias;

- Mean absolute error – MAE;

- Root mean square error – RMSE;

- Mean absolute percentage error – MAPE;

- Symmetric mean absolute percentage error – SMAPE;

- The F-test for checking whether the model is significant as a whole;

- The t-test for checking the significance of the features and the target;

- Durbin-Watson test for analyzing residuals.

Figure 16 shows metrics computed by comparing the observed apartment prices with the predicted ones.

The metrics are grouped for readability. The first group, shown in red, includes the correlation coefficient (between predicted and observed values) and the coefficient of determination, R². Both are dimensionless, and values closer to 1 are better. Note that correlation is not limited to predictions versus the target. It can also be computed between a feature and the target, or pairwise between features when there are many of them.

The second group, shown in green, includes metrics that measure error in the same units as the response, which here means $. For all three metrics, the interpretation is the same: the closer the value is to zero, the better (Animation 2).

One interesting detail: in Figure 16 the bias is zero in all cases. Literally, this means the model errors are not shifted in either direction on average. A question for you: why is this generally true for a linear regression model fitted to any dataset (try changing the input values and playing with different datasets)?

Animation 2 and Figure 16 also show that as the gap between and grows, RMSE reacts more strongly to large errors than MAE. That happens because RMSE squares the errors.

The third group, shown in blue, includes error metrics measured in percentages. Lower values are better. MAPE is sensitive to errors when the true values are small, because the formula divides the prediction error by the observed value itself. When the actual value is small, even a modest absolute error turns into a large percentage and can strongly affect the final score (Figure 17).

Figure 17 shows that the difference measured in the original units, the absolute deviation between observed and predicted values, stays the same in both cases: it is 0 for the first pair, 8 for the second, and 47 for the third. For percentage-based metrics, the errors shrink for an obvious reason: the observed values become larger.

The change is larger for MAPE, because it normalizes each error by the observed value itself. sMAPE, in contrast, normalizes by the average magnitude of the observed and predicted values. This difference matters most when the observations are close to zero, and it fades as values move away from zero, which is exactly what the figure shows.

Side branch 4. Features of MAPE and SMAPE calculations

The details of metric calculations are important to discuss. Using MAPE and SMAPE (and briefly MAE) as examples, this section shows how differently metrics can behave across datasets. The main takeaway is simple: before starting any machine learning project, think carefully about which metric, or metrics, you should use to measure quality. Not every metric is a good fit for your specific task or data.

Here is a small experiment. Using the data from Figure 17, take the original arrays, observations [1,2,3] and predictions [1,10,50]. Shift both arrays away from zero by adding 10 to every value, repeated for 10 iterations. At each step, compute three metrics: MAPE, SMAPE, and MAE. The results are shown in the plot below:

As can be seen from the figure above, the larger the values included in the dataset, the smaller the difference between MAPE and SMAPE, and the smaller the errors measured in percentage terms. The alignment of MAPE and SMAPE is explained by the calculation features that allow eliminating the effect of MAPE asymmetry, which is particularly noticeable at small observation values. MAE remains unchanged, as expected.

Now the reason for the word “asymmetry” becomes clear. The simplest way to show it is with an example. Suppose the model predicts 110 when the true value is 100. In that case, MAPE is 10%. Now swap them: the true value is 110, but the prediction is 100. The absolute error is still 10, yet MAPE becomes 9.1%. MAPE is asymmetric because the same absolute deviation is treated differently depending on whether the prediction is above the true value or below it.

Another drawback of MAPE is that it cannot be computed when some target values are zero. A common workaround is to replace zeros with a very small number during evaluation, for example 0.000001. Still, it is clear that this can inflate MAPE.

Other metrics have their own quirks as well. For example, RMSE is more sensitive to large errors than MAE. This section is not meant to cover every such detail. The main point is simple: choose metrics thoughtfully. Use metrics recommended in your domain, and if there are no clear standards, start with the most common ones and experiment.

To summarize, the units of measurement for metrics and the ranges of possible values are compiled in Table 2.

| Metric | Units | Range | Meaning |

| Pearson correlation coefficient (predictions vs target) | Dimensionless | from -1 to 1 | The closer to 1, the better the model |

| Coefficient of determination R2 | Dimensionless | from −∞ to 1 | The closer to 1, the better the model |

| Bias | The same unit as the target variable | from −∞ to ∞ | The closer to 1, the better the model |

| Mean absolute error (MAE) | The same unit as the target variable | from 0 to ∞ | The closer to zero, the better the model |

| Root mean square error (RMSE) | The same unit as the target variable | from 0 to ∞ | The closer to zero, the better the model |

| Mean absolute percentage error (MAPE) | Percentage (%) | from 0 to ∞ | The closer to zero, the better the model |

| Symmetric mean absolute percentage error (SMAPE) | Percentage (%) | from 0 to 200 | The closer to zero, the better the model |

As mentioned earlier, this is not a complete list of metrics. Some tasks may require more specialized ones. If needed, quick reference info is always easy to get from your favorite LLM.

Here is a quick checkpoint. Model evaluation started with a table of predicted and observed values (Table 1). Large tables are hard to inspect, so the same information was made easier to digest with plots, moving to visual evaluation (Figures 9-14). The task was then simplified further: instead of relying on expert judgment from plots, metrics were computed (Figures 15-17 and Animations 1-3). There is still a catch. Even after getting one or several numbers, it is still up to us to decide whether the metric value is “good” or not. In Figure 15, a 5% threshold was used for MAPE. That heuristic cannot be applied to every linear regression task. Data varies, business goals are different, etc. For one dataset, a good model might mean an error below 7.5%. For another, the acceptable threshold might be 11.2%.

F test

That is why we now turn to statistics and formal hypothesis testing. A statistical test can, in principle, save us from having to decide where exactly to place the metric threshold, with one important caveat, and give us a binary answer: yes or no.

If you have never come across statistical tests before, it makes sense to start with a simplified definition. A statistical test is a way to check whether what we observe is just random variation or a real pattern. You can think of it as a black box that takes in data and, using a set of formulas, produces an answer: a few intermediate values, such as a test statistic and a p-value, and a final verdict (Figure 18) [Sureiman, Onchiri, et al. F-test of overall significance in regression analysis simplified. 2020. https://www.tandfonline.com/doi/full/10.1080/00031305.2016.1154108].

As Figure 18 shows, before running a test, we need to choose a threshold value. Yes, this is the right moment to come back to that caveat: here too, we have to deal with a threshold. But in this case it is much easier, because there are widely accepted standard values to choose from. This threshold is called the significance level. A value of 0.05 means that we accept a 5% chance of incorrectly rejecting the null hypothesis. In this case, the null hypothesis could be something like: the model is no better than a naive prediction based on the mean. We can vary this threshold. For example, some scientific fields use 0.01 or even 0.001, which is more strict, while others use 0.10, which is less strict.

If the practical meaning of the significance level is not fully clear at this point, that is completely fine. There is a more detailed explanation at the end of this section. For now, it is enough to fix one key point: the statistical tests discussed below have a parameter, , which we as researchers or engineers choose based on the task. In our case, it is set to 0.05.

So, a statistical test lets us take the data and a few chosen parameters, then compute test quantities that are used for comparison, for example, whether the test statistic is above or below a threshold. Based on that comparison, we decide whether the model is statistically significant. I would not recommend reinventing the wheel here. It is better to use statistical packages (it is reliable) to compute these tests, which is one reason why I am not giving the formulas in this section. As for what exactly to compare, the two common options are the F statistic against the critical F value, or the p-value against the significance level. Personally, mostly out of habit, I lean toward the second option.

We can use the F test to answer the question, “Is the model significant?” Since statistics is a mathematical discipline, let us first describe the two possible interpretations of the fitted model in a formal way. The statistical test will help us decide which of these hypotheses is more plausible.

We can formulate the null hypothesis (H₀) as follows: all coefficients for the independent variables, that is, the features, are equal to zero. The model does not explain the relationship between the features and the target variable any better than simply using the (target) mean value.

The alternative hypothesis (H₁) is then: at least one coefficient is not equal to zero. In that case, the model is significant because it explains some part of the variation in the target variable.

Now let us run the tests on our three datasets, A, B, and C (Figure 19).

As we can see from Figure 19, in all three cases the p-value is below 0.05, which is our chosen significance level. We use 0.05 because it is the standard default threshold, and in the case of apartment price prediction, choosing the wrong hypothesis is not as critical as it would be, for example, in a medical setting. So there is no strong reason to make the threshold more strict here. p-value is below 0.05 means we reject the null hypothesis, H₀, for models A, B, and C. After this check, we can say that all three models are statistically significant overall: at least one feature contributes to explaining variation in the target.

However, the example of dataset C shows that confirmation that the model is significantly better than the average price does not necessarily mean that the model is actually good. The F-statistic checks for minimum adequacy.

One limitation of this approach to model evaluation is that it is quite narrow in scope. The F test is a parametric test designed specifically for linear models, so unlike metrics such as MAPE or MAE, it cannot be applied to something like a random forest (another machine learning algorithm). Even for linear models, this statistical test also requires standard assumptions to be met (see Assumptions 2-4 above: independence of observations, normality of residuals, and homoscedasticity).

Still, if this topic interests you, there is plenty more to explore on your own. For example, you could look into the t test for individual features, where the hypothesis is tested separately for each model coefficient, or the Durbin-Watson test. Or you can choose any other statistical test to study further. Here we only covered the basic idea. P.S. It is especially worth paying attention to how the test statistics are calculated and to the mathematical intuition behind them.

Side branch 5. If you are not entirely clear about the significance level , please read this section

Every time I tried to understand what significance level meant, I ran into a brick wall. More complex examples involved calculations that I didn’t understand. Simpler sources conveyed the concept more clearly – “here’s an example where everything is intuitively understandable”:

- H₀ (null hypothesis): The patient does not have cancer;

- Type I error: The test says “cancer is present” when it is not actually;

- If the significance level is set at 0.05, in 5% of cases the test may mistakenly alarm a healthy person by informing them that they have cancer;

- Therefore, in medicine, a low (e.g., 0.01) is often chosen to minimize false alarms.

But here we have data and model coefficients – everything is fixed. We apply the F-test and get a p-value < 0.05. We can run this test 100 times, and the result will be the same, because the model is the same and the coefficients are the same. There we go – 100 times we get confirmation that the model is significant. And what is the 5 percent threshold here? Where does this “probability” come from?

Let us break this down together. Start with the phrase, “The model is significant at the 0.05 level”. Despite how it sounds, this phrase is not really about the model itself. It is really a statement about how convincing the observed relationship is in the data we used. In other words, imagine that we repeatedly collect data from the real world, fit a model, then collect a new sample and fit another one, and keep doing this many times. In some of those cases, we will still find a statistically significant relationship even if, in reality, no real relationship exists between the variables. The significance level helps us account for that.

To sum up, with a p-value threshold of 0.05, even if no real relationship exists, the test will still say “there is a relationship” in about 5 out of 100 cases, simply because of random variation in the data.

To make the text a bit less dense, let me illustrate this with an animation. We will generate 100 random points, then repeatedly draw datasets of 30 observations from that pool and fit a linear regression model to each one. We will repeat this sampling process 20 times. With a significance level of 5%, this means we allow for about 1 case out of 20 in which the F test says the model is significant even though, in reality, there is no relationship between the variables.

Indeed, in 1 out of 20 cases where there was actually no relationship between x and y, the test still produced a p-value below 0.05. If we had chosen a stricter significance level, for example 0.01, we would have avoided a Type I error, that is, a case where we reject H₀ (there is no relationship between x and y) and accept the alternative hypothesis even though H₀ is in fact true.

For comparison, we will now generate a population where a clear linear relationship is present and repeat the same experiment: 20 samples and the same 20 attempts to fit a linear regression model.

To wrap up this overview chapter on regression metrics and the F test, here are the main takeaways:

- Visual methods are not the only way to assess prediction error. We can also use metrics. Their main advantage is that they summarize model quality in a single number, which makes it easier to judge whether the model is good enough or not.

- Metrics are also used during model optimization, so it is important to understand their properties. For example:

- The metrics from the “green group” (RMSE, MAE, and bias) are convenient because they are expressed in the original units of the target.

- The root mean squared error (RMSE) reacts more strongly to large errors and outliers than the mean absolute error (MAE).

- The “blue group” (MAPE and SMAPE) is expressed in percent, which often makes these metrics convenient to discuss in a business context. At the same time, when the target values are close to zero, these metrics can become unstable and produce misleading estimates.

- Statistical tests provide an even more compact assessment of model quality, giving an answer in the form of “yes or no”. However, as we saw above, such a test only checks basic adequacy, where the main alternative to the fitted regression model is simply predicting the mean. It does not help in more complex cases, such as dataset C, where the relationship between the feature and the target is captured by the model well enough to rise above statistical noise, but not fully.

Later in the article, we will use different metrics throughout the visualizations, so that you get used to looking beyond just one favorite from the list 🙂

Forecast uncertainty. Prediction interval

An interesting combination of visual assessment and formal metrics is the prediction interval. A prediction interval is a range of values within which a new observation is expected to fall with a given probability. It helps show the uncertainty of the prediction by combining statistical measures with the clarity of a visual representation (Figure 20).

The main question here is how to choose these threshold values, . The most natural approach, and the one that is actually used in practice, is to extract information about uncertainty from the cases where the model already made errors during training, namely from the residuals. But to turn a raw set of differences into actual threshold values, we need to go one level deeper and look at linear regression as a probabilistic model.

Recall how point prediction works. We plug the feature values into the model, in the case of simple linear regression, just one feature, and compute the prediction. But a prediction is rarely exact. In most cases, there is a random error.

When we set up a linear regression model, we assume that small errors are more likely than large ones, and that errors in either direction are equally likely. These two assumptions lead to the probabilistic view of linear regression, where the model coefficients and the error distribution are treated as two parts of the same whole (Figure 21) [Fisher, R. A. On the Mathematical Foundations of Theoretical Statistics. 1922. https://doi.org/10.1098/rsta.1922.0009].

As Figure 21 shows, the variability of the model errors can be estimated by calculating the standard deviation of the errors, denoted by . We could also talk about the error variance here, since it is another suitable measure of variability. The standard deviation is simply the square root of the variance. The larger the standard deviation, the greater the uncertainty of the prediction (see Section 2 in Figure 21).

This leads us to the next step in the logic: the more widely the errors are spread, the less certain the model is, and the wider the prediction interval becomes. Overall, the width of the prediction interval depends on three main factors:

- Noise in the data: the more noise there is, the greater the uncertainty;

- Sample size: the more data the model has seen during training, the more reliably its coefficients are estimated, and the narrower the interval becomes;

- Distance from the center of the data: the farther the new feature value is from the mean, the higher the uncertainty.

In simplified form, the procedure for building a prediction interval looks like this:

- We fit the model (using the formula from the previous section, Figure 6)

- We compute the error component, that is, the residuals

- From the residuals, we estimate the typical size of the error

- Obtain the point prediction

- Next, we scale s using several adjustment factors: how much training data the model was fitted on, how far the feature value is from the center of the data, and the selected confidence level. The confidence level controls how likely the interval is to contain the value of interest. We choose it based on the task, in much the same way we earlier chose the significance level for statistical testing (common by default – 0.95).

As a simple example, we will generate a dataset of 30 observations with a “perfect” linear relationship between the feature and the target, fit a model, and compute the prediction interval. Then we will 1) add noise to the data, 2) increase the sample size, and 3) raise the confidence level from 90% to 95 and 99%, where the prediction interval reaches its maximum width (see Animation 4).

And consider separately what the prediction interval looks like for datasets A, B, and C (Figure 22).

Figure 22 clearly shows that even though models A and B have the same coefficients, their prediction intervals differ in width, with the interval for dataset B being much wider. In absolute terms, the widest prediction interval, as expected, is produced by the model fitted to dataset C.

Train test split and metrics

All of the quality assessments discussed so far focused on how the model behaves on the same observations it was trained on. In practice, however, we want to know whether the model will also perform well on new data it has not seen before.

That is why, in machine learning, it is common best practice to split the original dataset into parts. The model is fitted on one part, the training set, and its ability to generalize is evaluated on the other part, the test sample (Figure 23).

If we combine these model diagnostic methods into one large visualization, this is what we get:

Figure 24 shows that the metric values are worse on the test data, which is exactly what we would expect, since the model coefficients were optimized on the training set. A few more observations stand out:

- First, the bias metric has finally become informative: on the test data it is no longer zero, as it was on the training data, and now shifts in both directions, upward for datasets A and B, and downward for dataset C.

- Second, dataset complexity clearly matters here. Dataset A is the easiest case for a linear model, dataset B is more difficult, and dataset C is the most difficult. As we move from training to test data, the changes in the metrics become more noticeable. The residuals also become more spread out in the plots.

In this section, it is important to point out that the way we split the data into training and test sets can affect what our model looks like (Animation 5).

The choice of splitting strategy depends on the task and on the nature of the data. In some cases, the subsets should not be formed at random. Here are a few situations where that makes sense:

- Geographic or spatial dependence. When the data have a spatial component, for example temperature measurements, air pollution levels, or crop yields from different fields, nearby observations are often strongly correlated. In such cases, it makes sense to build the test set from geographically separated regions in order to avoid overestimating model performance.

- Scenario-based testing. In some business problems, it is important to evaluate in advance how the model will behave in certain critical or rare situations, for example at high or extreme feature values. Such cases can be intentionally included in the test set, even if they are absent or underrepresented in the training sample.

Imagine that there are only 45 apartments in the world…

To make the rest of the discussion easier to follow, let us introduce one important simplification for this article. Imagine that our hypothetical world, the one in which we build these models, is very small and contains only 45 apartments. In that case, all our earlier attempts to fit models on datasets A, B, and C were really just individual steps toward recovering that original relationship from all the available data.

From this point of view, A, B, and C are not really separate datasets, even though we can imagine them as data collected in three different cities, A, B, and C. Instead, they are parts of a larger population, D. Let us assume that we can combine these samples and work with them as a single whole (Figure 25).

It is important to keep in mind that everything we do, splitting the data into training and test sets, preprocessing the data, calculating metrics, running statistical tests, and everything else, serves one goal: to make sure the final model describes the full population well. The goal of statistics, and this is true for supervised machine learning as well, is to draw conclusions about the whole population using only a sample.

In other words, if we somehow built a model that predicted the prices of these 45 apartments perfectly, we would have a tool that always gives the correct answer, because in this hypothetical world there are no other data on which the model could fail. Again, everything here depends on that “if.” Now let me return us to reality and try to describe all the data with a single linear regression model (Figure 26).

In the real world, collecting data on every apartment is physically impossible, because it would take too much time, money, and effort, so we always work with only a subset. The same applies here: we collected samples and tried to estimate the relationship between the variables in a way that would bring us as close as possible to the relationship in population, entire dataset D.

One very important note: Later in the article, we will occasionally take advantage of the rules of our simplified world and peek at how the fitted model behaves on the full population. This will help us understand whether our modifications were successful, when the error metric goes down, or not, when the error metric goes up. At the same time, please keep in mind that this is not something we can do in the real world. In practice, it is impossible to evaluate a model on every single object!

Improving model quality

In the previous section, before we combined our data into one full population, we measured the model’s prediction error and found the results unsatisfying. In other words, we want to improve the model. Broadly speaking, there are three ways to do that: change the data, change the model, or change both. More specifically, the options are:

- Expanding the sample: increasing the number of observations in the dataset

- Reducing the sample: removing outliers and other undesirable rows from the data table

- Making the model more complex: adding new features, either directly observed or newly engineered

- Making the model simpler: reducing the number of features (sometimes this also improves the metrics)

- Tuning the model: searching for the best hyperparameters, meaning parameters that are not learned during training

We will go through these approaches one by one, starting with sample expansion. To illustrate the idea, we will run an experiment.

Expanding the sample

Keep in mind that the values from the full population are not directly available to us, and we can only access them in parts. In this experiment, we will randomly draw samples of 10 and 20 apartments. For each sample size, we will repeat the experiment 30 times. The metrics will be measured on 1) the training set, 2) the test set, and 3) the population, that is, all 45 observations. This should help us see whether larger samples lead to a more reliable model for the full population (Animation 6).

Increasing the sample size is a good idea if only because mathematical statistics tends to work better with larger numbers. As a result, the metrics become more stable, and the statistical tests become more reliable as well (Figure 27).

If boxplots are more familiar to you, take a look at Boxplot version of Figure 27.

Figure 27 in a form of Boxplot

Even though we worked here with very small samples, partly for visual convenience, Animation 6 and Figure 27 still let us draw a few conclusions that also hold for larger datasets. In particular:

- The average RMSE on the population is lower when the sample size is 20 rather than 10, specifically 4088 versus 4419. This means that a model fitted on more data has a lower error on the population (all available data).

- The metric estimates are more stable for larger samples. With 20 observations, the gap between RMSE on the training set, the test set, and the population is smaller.

As we can see, using larger samples, 20 observations rather than 10, led to better metric values on the population. The same principle applies in practice: after making changes to the data or to the model, always check the metrics. If the change improves the metric, keep it. If it makes the metric worse, roll it back. Rely on an engineering mindset, not on luck. Of course, in the real world we cannot measure metrics on the full population. But metrics on the training and test sets can still help us choose the right direction.

Reducing the sample by filtering outliers

Since this section is about pruning the sample, I will leave out the train-test split so the visualizations stay easier to read. Another reason is that linear models are highly sensitive to filtering when the sample is small, and here we are deliberately using small samples for clarity. So in this section, each model will be fitted on all observations in the sample.

We tried to collect more data for model fitting. But now imagine that we were unlucky: even with a sample of 20 observations, we still failed to obtain a model that looks close to the reference one (Figure 28).

Besides a sample that does not reflect the underlying relationship well, other factors can make the task even harder. Such distortions are quite common in real data for many reasons: measurement inaccuracies, technical errors during data storage or transfer, and simple human mistakes. In our case, imagine that some of the real estate agents we asked for data made mistakes when entering information manually from paper records: they typed 3 instead of 4, or added or removed zeros (Figure 29).

If we fit a model to this raw data, the result will be far from the reference model, and once again we will be unhappy with the modeling quality.

This time, we will try to solve the problem by removing a few observations that are much less similar to the rest, in other words, outliers. There are many methods for this, but most of them rely on the same basic idea: separating similar observations from unusual ones using some threshold (Figure 30) [Mandic-Rajcevic, et al. Methods for the Identification of Outliers and Their Influence on Exposure Assessment in Agricultural Pesticide Applicators: A Proposed Approach and Validation Using Biological Monitoring. 2019. https://doi.org/10.3390/toxics7030037]:

- Interquartile range (IQR), a nonparametric method

- Three-sigma rule, a parametric method, since it assumes a distribution, most often a normal one

- Z-score, a parametric method

- Modified Z-score (based on the median), a parametric method

Parametric methods rely on an assumption about the shape of the data distribution, most often a normal one. Nonparametric methods do not require such assumptions and work more flexibly, mainly using the ordering of values or quantiles. As a result, parametric methods can be more effective when their assumptions are correct, while nonparametric methods are usually more robust when the distribution is unknown.

In one-dimensional methods (Figure 30), the features are not used. Only one variable is considered, namely the target y. That is why, among other things, these methods clearly do not take the trend in the data into account. Another limitation is that they require a threshold to be chosen, whether it is 1.5 in the interquartile range rule, 3 in the three-sigma rule, or a cutoff value for the Z-score.

Another important note is that three of the four outlier filtering methods shown here rely on an assumption about the shape of the target distribution. If the data are normally distributed, or at least have a single mode and are not strongly asymmetric, then the three-sigma rule, the Z-score method, and the modified Z-score method will usually give reasonable results. But if the distribution has a less usual shape, points flagged as outliers may not actually be outliers. Since in Figure 30 the distribution is fairly close to a normal bell shape, these standard methods are appropriate in this case.

One more interesting detail is that the three-sigma rule is really a special case of the Z-score method with a threshold of 3.0. The only difference is that it is expressed in the original y scale rather than in standardized units, that is, in Z-score space. You can see this in the plot by comparing the lines from the three-sigma method with the lines from the Z-score method at a threshold of 2.0.

If we apply all of the filtering methods described above to our data, we obtain the following fitted models (Figure 31).

Looking at Figure 31, we can see that the worst model in terms of RMSE on the population is the one fitted on the data with outliers still included. The best RMSE is achieved by the model fitted on the data filtered using the Z-score method with a threshold of 1.5.

Figure 31 makes it fairly easy to compare how effective the different outlier filtering methods are. But that impression is misleading, because here we are checking the metrics against the full population D, which is not something we have access to in real model development.

So what should we do instead? Experiment. In some cases, the quickest and most practical option is to clean the test set and then measure the metric on it. In others, outlier removal can be treated as successful if the gap between the training and test errors becomes smaller. There is no single approach that works best in every case.

I suggest moving on to methods that use information from multiple variables. I will mention four of them, and we will look at the last two separately:

Each method shown in Figure 32 deserves a separate discussion, since they are already much more advanced than the one-dimensional approaches. Here, however, I will limit myself to the visualizations and avoid going too deep into the details. We will treat these methods from a practical point of view and look at how their use affects the coefficients and metrics of a linear regression model (Figure 33).

The methods shown in the visualizations above are not limited to linear regression. This kind of filtering can also be useful for other regression algorithms, and not only regression ones. That said, the most interesting methods to study separately are the ones that are specific to linear regression itself: leverage, Cook’s distance, and Random Sample Consensus (RANSAC).

Now let us look at leverage and Cook’s distance. Leverage is a quantity that shows how unusual an observation is along the x-axis, in other words, how far is from the center of the data. If it is far away, the observation has high leverage. A good metaphor here is a seesaw: the farther you sit from the center, the more influence you have on the motion. Cook’s distance measures how much a point can change the model if we remove it. It depends on both leverage and the residual.

In the example above, the calculations are performed iteratively for clarity. In practice, however, libraries such as scikit-learn implement this differently, so Cook’s distance can be computed without actually refitting the model n times.

One important note: a large Cook’s distance does not always mean the data are bad. It may point to an important cluster instead. Blindly removing such observations can hurt the model’s ability to generalize, so validation is always a good idea.

If you are looking for a more automated way to filter out values, that exists too. One good example is the RANSAC algorithm, which is a useful tool for automatic outlier removal (Animation 8). It works in six steps:

- Randomly select a subset of n observations.

- Fit a model to those n observations.

- Remove outliers, that is, exclude observations for which the model error exceeds a chosen threshold.

- Optional step: fit the model again on the remaining inliers and remove outliers one more time.

- Count the number of inliers, denoted by m.

- Repeat the first five steps several times, where we choose the number of iterations ourselves, and then select the model for which the number of inliers m is the largest.

The results of applying the RANSAC algorithm and the Cook’s distance method are shown in Figure 34.

Based on the results shown in Figure 34, the most promising model in this comparison is the one fitted with RANSAC.

To sum up, we tried to collect more data, and then filtered out what looked unusual. It is worth noting that outliers are not necessarily “bad” or “wrong” values. They are simply observations that differ from the rest, and removing them from the training set is not the same as correcting data errors. Even so, excluding extreme observations can make the model more stable on the larger share of more typical data.

For clarity, in the next part of the article we will continue working with the original unfiltered sample. That way, we will be able to see how the model behaves on outliers under different transformations. Still, we now know what to do when we want to remove them.

Making the model more complex: multiple linear regression

As an alternative, and also as a complement to the first two approaches (of model quality improvement), we can introduce new features to the model.

Feature engineering. Generating new features

A good place to start transforming the feature space is with one of the simplest approaches to implement: generating new features from the ones we already have. This makes it possible to avoid changes to the data collection pipelines, which in turn makes the solution faster and often cheaper to implement (in contrast to collecting new features from scratch). One of the most common transformations is the polynomial one, where features are multiplied by each other and raised to a power. Since our current dataset has only one feature, this will look as follows (Figure 36).

Note that the resulting equation is now a polynomial regression model, which makes it possible to capture nonlinear relationships in the data. The higher the polynomial degree, the more degrees of freedom the model has (Figure 37).

There are many different transformations that can be applied to the original data. However, once we use them, the model is no longer truly linear, which is already visible in the shape of the fitted curves in Figure 37. For that reason, I will not go into them in detail in this article. If this sparked your curiosity, you can read more about other feature transformations that can be applied to the original data. A good reference here is Trevor Hastie, Robert Tibshirani, Jerome Friedman – The Elements of Statistical Learning):

- Functional transformations

- Logarithms:

- Reciprocals:

- Roots:

- Exponentials:

- Trigonometric functions: especially when a feature has periodic behavior

- Sigmoid:

- Binarization and discretization

- Binning: split a feature X into intervals, for example,

- Quantile binning: split the data into groups with equal numbers of observations

- Threshold functions (hello, neural networks)

- Splines

- Wavelet and Fourier transforms

- and many others

Collecting new features

If generating new features does not improve the metric, we can move to a “heavier” approach: collect more data, but this time not new observations, as we did earlier, but new characteristics, that is, new columns.

Suppose we have a chance to collect several additional candidate features. In the case of apartment prices, the following would make sense to consider:

- Apartment area, in square meters

- Distance to the nearest metro station, in meters

- City

- Whether the apartment has air conditioning

The updated dataset would then look as follows:

A note on visualization

Looking back at Figure 1, and at most of the figures earlier in the article, it is easy to see that a two-dimensional plot is no longer enough to capture all the features. So it is time to switch to new visualizations and look at the data from a different angle (Figure 39 and Animation 9).

It is best to review the figure in detail (Figure 40).

Animation 9 highlights two noticeable patterns in the dataset:

- The closer an apartment is to the metro, the higher its price tends to be. Apartments near metro stations also tend to have a smaller area (Observation 2 in Figure 40)

- Air conditioning is a feature that clearly separates the target, that is, apartment price: apartments with air conditioning tend to be more expensive (Observation 6 in Figure 40).

As the figures and animation show, a good visualization can reveal important patterns in the dataset long before we start fitting a model or looking at residual plots.

Side branch 6. Thinking back to Figure 5, why did the price decrease after all?

Let us go back to one of the first figures (Figure 5 and Figure 7) in the article, the one used to explain the idea of describing data with a straight line. It showed an example with three observations where the price went down even though the number of rooms increased. But everything becomes clear once we visualize the data with an additional feature:

The reason for the price drop becomes much clearer here: even though the apartments were getting larger, they were also much farther from the metro station. Do not let the simplicity of this example fool you. It illustrates an important idea that is easy to lose sight of when working with truly large and complex data: we cannot see relationships between variables beyond the data we actually analyze. That is why conclusions should always be drawn with care. A new pattern may appear as soon as the dataset gains one more dimension.

As the number of features grows, it becomes harder to build pairwise visualizations like the ones shown in Figures 39 and 40. If your dataset contains many numerical features, a common choice is to use correlation matrices (Figure 41). I am sure you will come across them often if you continue exploring data science / data analysis domain.

The same principle applies here as it did when evaluating model quality: it is cognitively easier for an engineer to interpret numbers, one for each pair, than to inspect a large set of subplots. Figure 41 shows that price is positively correlated with the features number of rooms and area, and negatively correlated with distance to the metro. This makes sense: in general, the closer an apartment is to the metro or the larger it is, the more expensive it tends to be.

It is also worth noting why the correlation coefficient is so often visualized. It is always useful to check whether the dataset contains predictors that are strongly correlated with each other, a phenomenon known as multicollinearity. That is exactly what we see for the pair number of rooms and area, where the correlation coefficient is equal to one. In cases like this, it often makes sense to remove one of the features, because it adds little useful information to the model while still consuming resources, for example during data preparation and model optimization. Multicollinearity can also lead to other unpleasant consequences, but we will talk about it a bit later.

On the importance of preprocessing (categorical) features

As Figure 39 shows, the table now contains not only clean numerical values such as the number of rooms, but also less tidy distances to the metro, and even not straightforward values such as city names or text answers to questions like whether the apartment has a certain feature (e.g. air conditioning).

And while distance to the metro is not a problem, it is just another numerical feature like the ones we used in the model earlier, city names cannot be fed into the model directly. Just try assigning a coefficient to an expression like this: apartment price = X * New York. You could joke that some “apartments” really might cost, say, two New York, but that will not give you a useful model. That is why categorical features require special methods to convert them into numerical form

Starting with the simpler feature, air conditioning, since it takes only two values, yes or no. Features like this are usually encoded, that is, converted from text into numbers, using two values, for example (Figure 42):

Notice that Figure 42 does not show two separate models, each fitted to its own subset, but a single model. Here, the slope coefficient stays fixed, while the vertical shift of the fitted line differs depending on whether the binary feature is 0 or 1. This happens because when the feature is equal to 0, the corresponding term in the model becomes zero. This works well when the relationship between the features and the target is linear and follows the same direction for all observations. But a binary feature will not help much when the relationship is more complex and changes direction across the data (Figure 43).

As Figure 43 shows, in the worst case a model with a binary feature collapses to the same behavior as a model with just one numerical feature. To address this “problem,” we can borrow an idea from the previous section (feature generation) and generate a new interaction feature, or we can fit two separate models for different parts of the dataset (Figure 44).

Now that we have dealt with the binary feature, it makes sense to move on to the more complex case where a column contains more than two unique values. There are many ways to encode categorical values, and some of them are shown in Figure 45. I will not go through all of them here, though, because in my own experience one-hot encoding has been enough for practical applications. Just keep in mind that there are different encoding methods.

Estimating feature importance

Now that we know how to make the model more complex by adding new features, it makes sense to talk about how to combine the independent variables more thoughtfully. Of course, when the feature space grows, whether through feature generation or through collecting new data, practical limits quickly appear, such as “common sense” and model “training time”. But we can also rely on more effective heuristics to decide which features are actually worth keeping in the model. Starting with the simplest one and take a closer look at the coefficients of a multiple linear regression model (Figure 46).

As Figure 46 shows, a small problem appears here: differences in feature scale affect the estimated coefficients. Differences in scale also lead to other unpleasant effects, which become especially noticeable when numerical methods are used to find the optimal coefficients. That is why it is standard practice to bring features to a common scale through normalization.

Normalization and standardization (standard scaling) of features

Normalization is a data transformation that brings the values in the arrays to the same range (Figure 47).

Once the features are brought to the same scale, the size of the coefficients in a linear regression model becomes a convenient indicator of how strongly the model relies on each variable when making predictions.

The exact formulas used for normalization and standardization are shown in Figure 48.

From this point on, we will assume that all numerical features have been standardized. For the sake of clearer visualizations, we will apply the same transformation to the target as well, even though that is not compulsory. When needed, we can always convert the target back to its original scale.

Model coefficient and error landscape when the features are standardized

Once the original features have been standardized, meaning the coefficients , , and so on are now on a comparable scale, which makes them easier to vary, it becomes a good moment to look more closely at how their values affect model error. To measure error, we will use MAE and MAPE for simple linear regression, and RMSE for multiple linear regression.

As Animation 10 shows, there is a particular combination of coefficients at which the model error reaches its minimum. At the same time, changes in the intercept and the slope affect the error to a similar degree, the contour lines of the error surface on the left are almost circular.

For comparison, it is useful to look at how different metric landscapes can be. In the case of mean absolute percentage error, the picture changes noticeably. Because MAPE is sensitive to errors at small target values, here, “cheap apartments”, the minimum stretches into an elongated valley. As a result, many coefficient combinations produce similar MAPE values as long as the model fits the region of small y well, even if it makes noticeable errors for expensive apartments (Animation 11).

Next, we increase the number of features in the model, so instead of finding the optimal combination of two coefficients, we now need to find the best combination of three (Animations 12 and 13):

The animations above show that the features are strongly linearly related. For example, in Animation 12, the vs projection, the plane on the left in the lower-left panel, shows a clear linear pattern. This tells us two things. First, there is a strong negative correlation between the features number of rooms and distance to the metro. Second, even though the coefficients “move along the valley” of low RMSE values, the model predictions remain stable, and the error hardly changes. This also suggests that the features carry similar information. The same pattern appears in Animation 13, but there the linear relationship between the features is even stronger, and positive rather than negative.

I hope this short section with visualizations gave you a chance to catch your breath, because the next part will be harder to follow: from here on, linear algebra becomes unavoidable. Still, I promise it will include just as many visualizations and intuitive examples.

Extending the analytical solution to the multivariate case