that is dear to me (and to many others) because it has, in a way, watched me grow from an elementary school student all the way to a (soon-to-be!) college graduate. An undeniable part of the game’s charm is the infinite replayability that comes from its world generation. In current editions of the game, Minecraft combines a variety of noise functions to procedurally generate [1] its worlds in the form of chunks, that is, fixed-size columns of blocks, in a way that tends to (more or less) form ‘natural’ looking terrain, providing much of the game’s immersion.

My goal with this project was to see if I could move beyond hard-coded noise and instead teach a model to ‘dream’ in voxels. By leveraging recent developments in Vector Quantized Variational Autoencoders (VQ-VAE) and Transformers, I built a pipeline to generate 3D world slices that capture the structural essence of the game’s landscapes. As a concrete output, I wanted the ability to generate chunks (arranged in a grid) that looked like Minecraft’s terrain.

As a side note, this isn’t an entirely novel idea: ChunkGAN [2] takes an alternate approach to the same goal.

The Challenge of 3D Generative Modeling

In a video [3] from January 2026, Computerphile featured Lewis Stuart, who highlighted the main issues with 3D generation. I would encourage readers to give it a watch, but to summarize the key points: 3D generation is hard because good 3D datasets are hard to find or simply don’t exist, and adding a dimension makes things much harder (consider the classic three-body problem [4]). It should be noted that the video explicitly addresses diffusion models (which require labelled data), though many of the concerns carry over to 3D generation in general. Another issue is simply scale; a image ( pixels) would almost certainly be considered low-resolution by modern standards, but a 3D model at the same fidelity would require voxels. More points immediately imply higher compute requirements and can quickly make such tasks infeasible.

To overcome the 3D data scarcity mentioned by Stuart, I turned to Minecraft, which, in my opinion, is the best source of voxel data available for terrain generation. By using a script to teleport through a pre-generated world, I forced the game engine to load and render thousands of unique chunks. Using a separate extraction script, I pulled these chunks directly from the game’s region files. This gave me a dataset with high semantic consistency; unlike a collection of random 3D objects, these chunks represent a continuous, flowing landscape where the ‘logic’ of the terrain (how a river bed dips or how a mountain peaks) is preserved over chunk boundaries.

To bridge the gap between the complexity of 3D voxels and the limitations of modern hardware, I could not simply feed raw chunks into a model and hope for the best. I needed a way to condense the ‘noise’ of millions of blocks into a meaningful, compressed language. This led me to the heart of the project: a two-stage generative pipeline that first learns to ‘tokenize’ 3D space, and then learns to ‘speak’ it.

Data Preprocessing

A key yet non-obvious observation is that a significant portion of Minecraft’s chunks is filled with ‘air’ blocks. It’s non-obvious mostly because air isn’t technically a block: you can’t place it or remove it as you can with every other block in the game; rather, it’s the absence of a block at that point. In modern Minecraft, most of the vertical span is air and, as such, instead of considering full height levels, I restricted it to . Those more familiar with Minecraft’s world generation would know that blocks can have negative y-values, all the way to , and at this point I must apologize because, when I implemented this architecture, this had entirely slipped my mind. The model I present in this article would work just as well if you considered a larger vertical span but, due to my unfortunate oversight, the results that I present come from a restricted span of blocks.

On the note of restricting blocks, chunks contain a lot of block types that don’t show up very often and don’t contribute to the general shape of the terrain, but are necessary to maintain immersion for the player. At least for this project, I chose to restrict the vocabulary to the 30 most frequent block types in the dataset.
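As a rough illustration of this pruning step, the sketch below counts block names and keeps the most frequent ones. The function names, the `<other>` catch-all id, and the toy block list are assumptions made for this example; the article does not say what the discarded blocks were mapped to.

```python
from collections import Counter

def build_vocab(block_names, top_n=30):
    """Map the top_n most frequent block names to integer ids.

    Id 0 is reserved as a catch-all for every other block. That choice
    is an assumption of this sketch, not something taken from the project.
    """
    counts = Counter(block_names)
    vocab = {"<other>": 0}
    for name, _ in counts.most_common(top_n):
        vocab[name] = len(vocab)
    return vocab

def encode(block_names, vocab):
    """Replace each block name with its id, folding rare blocks into id 0."""
    return [vocab.get(name, 0) for name in block_names]

# Toy data; real chunks would supply many thousands of block names.
blocks = ["air"] * 6 + ["stone"] * 4 + ["dirt"] * 2 + ["diamond_ore"]
vocab = build_vocab(blocks, top_n=3)
tokens = encode(blocks, vocab)
```

With `top_n=3`, the single `diamond_ore` block falls outside the vocabulary and is encoded as the catch-all id.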

Pruning the vocabulary, so to speak, is useful but only half the battle. As stated before, because Minecraft worlds are primarily composed of ‘air’ and ‘stone,’ the dataset suffers from some pretty extreme class imbalance. To prevent the model from taking the ‘path of least resistance,’ that is, simply predicting empty space to achieve low loss, I implemented a Weighted Cross-Entropy loss. By scaling the loss based on the inverse log-frequency of each block, I forced the VQ-VAE to prioritize the structural ‘minorities’ like grass, water, and snow.

In plain terms: the rarer a block type is in the dataset, the more heavily the model is penalized for failing to predict it, pushing the network to treat a patch of snow or a river bed as just as important as the vast expanses of stone and air that dominate most chunks.
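To make the weighting concrete, here is a minimal, dependency-free sketch. The exact formula is an assumption: it uses `1 / log(smoothing + frequency)` with `smoothing = 1.02`, one common way to express inverse log-frequency weights, and the toy counts are invented for illustration. A real training loop would more likely pass such weights to a library loss function.

```python
import math

def inverse_log_weights(counts, smoothing=1.02):
    """Per-class weights that grow as a class becomes rarer.

    counts[c] is the number of voxels of class c. The smoothing constant
    (assumed here) keeps the logarithm positive for very common classes.
    """
    total = sum(counts)
    return [1.0 / math.log(smoothing + n / total) for n in counts]

def weighted_cross_entropy(logits, targets, weights):
    """Weighted mean of per-voxel negative log-likelihoods.

    logits: list of per-class score lists, targets: list of class ids.
    The mean is normalized by the sum of the weights that were applied.
    """
    num, den = 0.0, 0.0
    for scores, t in zip(logits, targets):
        m = max(scores)  # subtract the max for numerical stability
        log_z = m + math.log(sum(math.exp(s - m) for s in scores))
        num += weights[t] * (log_z - scores[t])
        den += weights[t]
    return num / den

# Toy counts for three classes: air, stone, snow (illustrative numbers).
weights = inverse_log_weights([700, 250, 50])
loss = weighted_cross_entropy([[2.0, 0.5, 0.1], [0.2, 0.1, 1.5]], [0, 2], weights)
```

In this toy setup, snow receives the largest weight and air the smallest, so a missed snow voxel costs more than a missed air voxel.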

Architecture Overview

This mermaid sequenceDiagram [6] provides a bird’s eye view of the architecture.

Raw Voxel Problem and Tokenizing 3D Space

A naive approach to building such an architecture would involve learning and building chunks block by block. There are many reasons why this would be less than ideal, but the most important is that it quickly becomes computationally infeasible without really providing semantic structure. Imagine assembling a LEGO set with thousands of bricks. While possible, it would be far too slow and it wouldn’t really have any structural integrity: pieces that are adjacent horizontally would not be connected, and you’d essentially be building a set of disjoint towers. LEGO addresses this with larger pieces, like the iconic brick, that take up space that would normally require multiple pieces. As such, you fill up space faster and there’s more structural integrity.

For the system, codewords are the LEGO bricks. Using a VQ-VAE (Vector Quantized Variational Autoencoder), the goal is to build a codebook, that is, a set of structural signatures that the model can use to reconstruct full chunks. Think of structures like a flat section of grass or a blob of diorite. In my implementation, I allowed a codebook with 512 unique codes.

To implement this, I used 3D Convolutions. While 2D convolutions are the bread and butter of image processing, 3D convolutions allow the model to learn kernels that slide across the X, Y, and Z axes simultaneously. This is vital for Minecraft, where the relationship between a block and the one below it (gravity/support) is just as important as its relationship to the one beside it.
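To show what ‘sliding across the X, Y, and Z axes’ means, here is a deliberately naive 3D convolution written with plain nested lists. It is only a sketch: the toy volume and the hand-picked vertical kernel are assumptions for illustration, whereas a real model would learn its kernels and use an optimized layer such as PyTorch’s `torch.nn.Conv3d`.

```python
def conv3d(volume, kernel):
    """Valid (no padding), stride-1 3D cross-correlation on nested lists.

    volume[x][y][z] and kernel[i][j][k] hold floats.
    """
    X, Y, Z = len(volume), len(volume[0]), len(volume[0][0])
    kx, ky, kz = len(kernel), len(kernel[0]), len(kernel[0][0])
    out = []
    for x in range(X - kx + 1):
        plane = []
        for y in range(Y - ky + 1):
            row = []
            for z in range(Z - kz + 1):
                acc = 0.0
                # Accumulate over the kernel window on all three axes.
                for i in range(kx):
                    for j in range(ky):
                        for k in range(kz):
                            acc += volume[x + i][y + j][z + k] * kernel[i][j][k]
                row.append(acc)
            plane.append(row)
        out.append(plane)
    return out

# Toy 3x3x3 volume: solid (1.0) for y < 2, air (0.0) on the top layer.
volume = [[[1.0 if y < 2 else 0.0 for z in range(3)] for y in range(3)]
          for x in range(3)]
# Kernel of shape 1x2x1 comparing each voxel with the one directly above it.
kernel = [[[-1.0], [1.0]]]
edges = conv3d(volume, kernel)
```

The kernel responds only where solid ground meets air, which is the kind of vertical relationship a 2D convolution over a single slice could not capture on its own.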

Further Details

The most critical component of this stage is the `VectorQuantizer`. This layer sits at the ‘bottleneck’ of the network, forcing continuous neural signals to snap to a fixed ‘vocabulary’ of 512 learned 3D shapes.
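The ‘snapping’ step can be sketched as a nearest-neighbour lookup. This is a minimal illustration, assuming squared Euclidean distance and a tiny hand-written codebook; the function name `quantize` is hypothetical, and the project’s `VectorQuantizer` layer will also handle batching and gradients, which are omitted here.

```python
def quantize(vector, codebook):
    """Return (index, codeword) of the codebook entry closest to vector."""
    def sq_dist(a, b):
        return sum((p - q) ** 2 for p, q in zip(a, b))

    index = min(range(len(codebook)), key=lambda i: sq_dist(vector, codebook[i]))
    return index, codebook[index]

# Three 2D codewords stand in for the 512 learned entries.
codebook = [[0.0, 0.0], [1.0, 1.0], [4.0, 0.0]]
index, snapped = quantize([0.9, 1.2], codebook)
```

The returned index is what later becomes a token, while the codeword itself is what the decoder receives.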

One of the biggest hurdles in VQ-VAE training is ‘dead’ embeddings, that is, codewords that the encoder never chooses, which effectively waste the model’s capacity. To solve this, I added a way to ‘reset’ dead codewords: if a codeword’s usage drops too low, the model forcefully re-initializes it by ‘stealing’ a vector from the current input batch.
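The original snippet is not reproduced here, so the following is only a sketch of the idea under stated assumptions: usage is tracked as a running count per codeword, the `threshold` value is hypothetical, and the replacement vector is picked uniformly at random from the batch of encoder outputs.

```python
import random

def reset_dead_codes(codebook, usage, encoder_outputs, threshold=1.0, rng=random):
    """Overwrite rarely used codewords with randomly chosen encoder outputs.

    usage[i] is a running count of how often code i was selected.
    Modifies codebook and usage in place and returns the reset indices.
    """
    reset = []
    for i, count in enumerate(usage):
        if count < threshold:
            # 'Steal' a vector from the current batch for the dead code.
            codebook[i] = list(rng.choice(encoder_outputs))
            # Bump the count so the revived code is not reset again at once.
            usage[i] = threshold
            reset.append(i)
    return reset

codebook = [[0.0, 0.0], [5.0, 5.0], [9.0, 9.0]]
usage = [10.0, 0.0, 3.0]
batch = [[1.0, 2.0], [3.0, 4.0]]
revived = reset_dead_codes(codebook, usage, batch, rng=random.Random(0))
```

Only the second codeword is below the threshold here, so it is the only one replaced; the healthy codewords are left untouched.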

Brick by Brick

A diverse assortment of blocks is great, but they don’t mean much unless they are put together well. To put these codewords to good use, I used a GPT. To make this work, I flattened the latent grid produced by the VQ-VAE into a sequence of tokens; essentially, the 3D world becomes a 1D language. The GPT then sees 8 chunks’ worth of tokens so that it can learn the spatial grammar, so to speak, of Minecraft and achieve the aforementioned semantic consistency.
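One way to picture the flattening is a simple raster scan over the latent grid. The ordering below (x, then y, then z) and the tiny 2x2x2 grid are assumptions for illustration; the article does not state which traversal order or latent shape the project uses.

```python
def flatten_grid(grid):
    """Flatten grid[x][y][z] of code indices into a 1D token list."""
    return [code for plane in grid for row in plane for code in row]

def unflatten(tokens, shape):
    """Inverse of flatten_grid for a grid of the given (X, Y, Z) shape."""
    X, Y, Z = shape
    if len(tokens) != X * Y * Z:
        raise ValueError("token count does not match the requested shape")
    return [[[tokens[(x * Y + y) * Z + z] for z in range(Z)]
             for y in range(Y)]
            for x in range(X)]

# Toy latent grid of codebook indices.
grid = [[[0, 1], [2, 3]], [[4, 5], [6, 7]]]
tokens = flatten_grid(grid)
restored = unflatten(tokens, (2, 2, 2))
```

Whatever order is chosen, it has to be the same when the generated tokens are reshaped for the decoder, otherwise the spatial layout is scrambled.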

To achieve this, I used causal self-attention, which lets each token attend only to the tokens that precede it.
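The sketch below shows the masking idea for a single attention head using plain lists. It is a simplified illustration rather than the project’s layer: the learned query, key, and value projections, multiple heads, and dropout are all left out, and the inputs are toy vectors.

```python
import math

def causal_self_attention(q, k, v):
    """Single-head attention where position t attends only to positions <= t.

    q, k, v are lists of T vectors (lists of floats).
    """
    d = len(q[0])
    out = []
    for t in range(len(q)):
        # Score only the current and earlier positions: this is the causal mask.
        scores = [sum(a * b for a, b in zip(q[t], k[s])) / math.sqrt(d)
                  for s in range(t + 1)]
        m = max(scores)
        exps = [math.exp(s - m) for s in scores]
        z = sum(exps)
        weights = [e / z for e in exps]
        out.append([sum(w * v[s][j] for s, w in enumerate(weights))
                    for j in range(len(v[0]))])
    return out

q = k = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
v = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]]
attended = causal_self_attention(q, k, v)
```

Because the first position can only see itself, its output equals its own value vector, and changing a later token never alters an earlier output.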

Finally, during inference, the model uses top-k sampling, along with a temperature setting to rein in erratic generations, inside an autoregressive generation loop.
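A minimal sketch of such a loop is shown below. The `next_logits` callable is a hypothetical stand-in for the trained GPT, and the default values of `k` and `temperature` are assumptions, since the article does not give the settings used.

```python
import math
import random

def sample_top_k(logits, k=3, temperature=1.0, rng=random):
    """Sample a token id from the k highest logits after temperature scaling."""
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    scaled = [logits[i] / temperature for i in top]
    m = max(scaled)
    # Unnormalized softmax weights; random.choices normalizes them itself.
    probs = [math.exp(s - m) for s in scaled]
    return rng.choices(top, weights=probs, k=1)[0]

def generate(next_logits, length, k=3, temperature=1.0, rng=random):
    """Autoregressive loop: each new token is sampled given all previous ones."""
    tokens = []
    for _ in range(length):
        tokens.append(sample_top_k(next_logits(tokens), k, temperature, rng))
    return tokens

# Dummy 'model' that ignores its context and always returns the same logits.
dummy_model = lambda tokens: [0.1, 5.0, 4.0, -2.0, 3.0]
sequence = generate(dummy_model, length=8, k=3, temperature=0.8,
                    rng=random.Random(0))
```

Lower temperatures concentrate probability on the highest-scoring tokens, while a smaller `k` removes unlikely tokens from consideration entirely; with `k=1` the loop reduces to greedy decoding.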

By the end of this sequence, the GPT has ‘written’ a structural blueprint 256 tokens long. The next step is to pass these through the VQ-VAE decoder to manifest a grid of recognizable Minecraft terrain.

Results

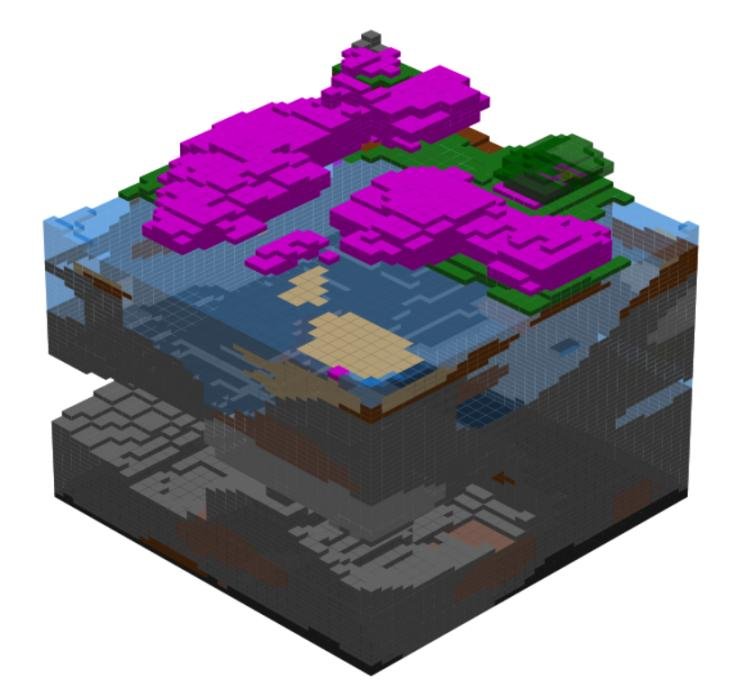

In this render [6], the model successfully clusters leaf blocks, mimicking the game’s tree structures.

In this one [6], the model uses snow blocks to cap the stone and grass, reflecting the high-altitude or tundra slices found in the training data. Additionally, this render shows that the model learned how to generate caves.

In this image [6], the model places water in a depression and borders it with sand, demonstrating that it has internalized the spatial logic of a coastline, rather than scattering water blocks arbitrarily across the surface.

Perhaps the most impressive result is the internal structure of the chunks. Because the implementation used 3D convolutions and a weighted loss function, the model actually generates subterranean features like contiguous caves, overhangs, and cliffs.

While the results are recognizable, they aren’t perfect clones of Minecraft. The VQ-VAE’s compression is ‘lossy,’ which sometimes results in a slight ‘blurring’ of block boundaries or the occasional floating block. However, for a model operating on a highly compressed latent space, the ability to maintain structural integrity across a chunk grid, I believe, is a significant success.

Reflections and Future Work

While the model successfully ‘dreams’ in voxels, there is significant room for expansion. Future iterations could revisit the full vertical span of to accommodate the massive jagged peaks and deep ‘cheese’ caves characteristic of modern Minecraft versions. Furthermore, scaling the codebook beyond 512 entries would allow the system to tokenize more complex, niche structures like villages or desert temples. Perhaps most exciting is the potential for conditional generation, or ‘biomerizing’ the GPT, which would enable users to guide the architectural process with specific prompts such as ‘Mountain’ or ‘Ocean,’ turning a random dream into a directed creative tool.

Thanks for reading! If you’re interested in the full implementation or want to experiment with the weights yourself, feel free to check out the repository [5].

Citations and Links

[1] Minecraft Wiki Editors, World generation (2026), https://minecraft.wiki/w/World_generation

[2] x3voo, ChunkGAN (2024), https://github.com/x3voo/ChunkGAN

[3] Lewis Stuart for Computerphile, Generating 3D Models with Diffusion – Computerphile (2026), https://www.youtube.com/watch?v=C1E500opYHA

[4] Wikipedia Editors, Three-body Problem (2026), https://en.wikipedia.org/wiki/Three-body_problem

[5] spaceybread, glowing-robot (2026), https://github.com/spaceybread/glowing-robot/tree/master

[6] Image by author.

A Note on the Dataset

All training data was generated by the author using a locally run instance of Minecraft Java Edition. Chunks were extracted from procedurally generated world files using a custom extraction script. No third-party datasets were used. As the data was generated and extracted by the author from their own game instance, no external licensing restrictions apply to its use in this research context.