So the new chips allow for faster training, but Google also says you get more useful computation for every watt you pump into a TPU 8t. The company claims a “goodput” rate of 97 percent, meaning nearly all of the cluster's time goes to productive training work rather than waiting or wasted effort. With better handling of irregular memory access, automatic handling of hardware faults, and real-time telemetry across all connected chips, TPU 8t spends more time actively advancing model training.

When training is done, AI models run in inference mode to generate tokens—that’s the process happening behind the scenes when you tell a model to do something. This doesn’t require as much horsepower, so using the same hardware for both parts of the AI lifecycle is inefficient. That’s why inference is the purview of TPU 8i, which is designed to be more efficient when running multiple specialized agents, with less waiting time. TPU 8i chips also run in larger pods of 1,152 chips versus just 256 for the last-gen Ironwood inference clusters. That works out to 11.6 EFlops per pod, much lower than TPU 8t pods.
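For a rough sense of scale, the pod figure can be divided back down to a single chip. The sketch below uses only the two numbers quoted above; the per-chip result is a derived average, not an official spec, and the numeric precision behind the EFlops figure is not stated here, so treat it as an illustration only. The function name is hypothetical.

```python
# Figures quoted in the article; the per-chip value is derived, not an official spec.
POD_EFLOPS = 11.6       # stated TPU 8i pod throughput
CHIPS_PER_POD = 1_152   # stated TPU 8i pod size


def per_chip_pflops(pod_eflops: float, chips: int) -> float:
    """Average per-chip throughput in PFLOPS (1 EFLOPS = 1,000 PFLOPS)."""
    return pod_eflops * 1_000 / chips


if __name__ == "__main__":
    value = per_chip_pflops(POD_EFLOPS, CHIPS_PER_POD)
    print(f"About {value:.2f} PFLOPS per TPU 8i chip")
```

Under those assumptions, each TPU 8i averages roughly 10 PFLOPS.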

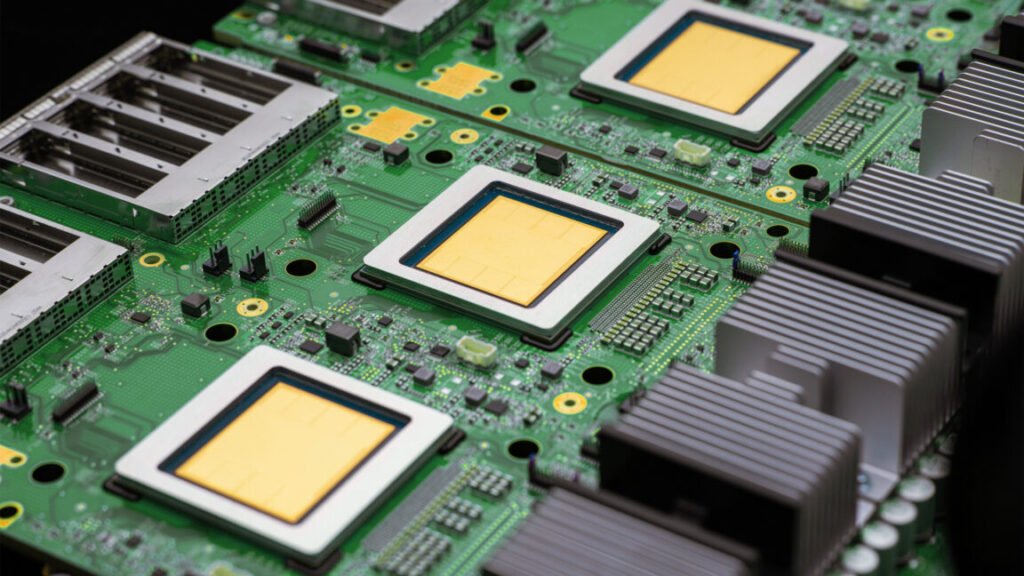

The TPU 8i has less raw power than TPU 8t.

Google has tripled the amount of on-chip SRAM for each TPU 8i to 384 MB. This allows the company’s new chips to keep a larger key-value (KV) cache on the chip, speeding up models with longer context windows. The eighth-gen AI accelerators are also the first from Google to rely solely on the company’s custom Axion ARM CPU host, featuring one CPU for every two TPUs. In Ironwood, each x86 CPU serviced four TPU chips. Google says this “full-stack” ARM-based approach allows for much greater efficiency.
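The numbers in this paragraph combine with the pod sizes mentioned earlier into a quick back-of-the-envelope comparison. This is a minimal sketch that assumes "tripled" is exact, that host counts follow directly from the stated CPU-to-TPU ratios, and that the 256-chip Ironwood figure is the right baseline. None of the derived values are published specs, and the function names are hypothetical.

```python
# Inputs are figures quoted in the article; everything computed is derived.
SRAM_MB_8I = 384           # on-chip SRAM per TPU 8i
CHIPS_8I_POD = 1_152       # TPU 8i pod size
CHIPS_IRONWOOD_POD = 256   # Ironwood inference cluster size


def previous_sram_mb(current_mb: float, factor: float = 3.0) -> float:
    """Implied earlier SRAM size, assuming the 'tripled' claim is exact."""
    return current_mb / factor


def pod_sram_gib(chips: int, sram_mb: float) -> float:
    """Aggregate on-chip SRAM across a pod, in GiB (1 GiB = 1,024 MiB)."""
    return chips * sram_mb / 1024


def host_cpus(chips: int, tpus_per_cpu: int) -> int:
    """Host CPUs needed for a pod at a fixed TPU-per-CPU ratio."""
    return chips // tpus_per_cpu


if __name__ == "__main__":
    print(f"Implied previous SRAM: {previous_sram_mb(SRAM_MB_8I):.0f} MB per chip")
    print(f"Aggregate TPU 8i pod SRAM: {pod_sram_gib(CHIPS_8I_POD, SRAM_MB_8I):.0f} GiB")
    print(f"Axion hosts per TPU 8i pod: {host_cpus(CHIPS_8I_POD, 2)}")
    print(f"x86 hosts per Ironwood cluster: {host_cpus(CHIPS_IRONWOOD_POD, 4)}")
```

On those assumptions, a full TPU 8i pod would hold about 432 GiB of on-chip SRAM and rely on 576 Axion hosts, compared with 64 x86 hosts for a 256-chip Ironwood cluster.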

An efficiency play

It makes sense that efficiency is a core part of Google’s new TPU setup. Training and running frontier AI models is expensive, and the return on investment is unclear. Companies are still burning money on generative AI in the hopes that efficiency will turn the corner at some point. Maybe Google’s new TPUs will help get there and maybe not, but the company has made notable improvements.